Credit: Ultra Heaven

In this post, I analyze nootropics ratings I gathered through a recommender system. Jump directly to What I learned if you don’t like caveats and methodology.

INTRODUCTION #

What is a nootropic?

The effectiveness of a nootropic varies a lot from one person to another (your mileage will vary). This is why I built a nootropic recommendation system, which I suggest you try before reading this post: enter ratings on nootropics you’ve tried, and it will spit out nootropics liked by people with similar rating patterns. It was initially based on the 2016 SlateStarCodex nootropics survey data.

Still, it is helpful to understand how good each nootropic is in general, if only to prioritize further research. Indeed, my cunning plan with this recommendation system was to incentivize people to answer a boring survey. My plan worked better than I expected, and I got 1,981 people to enter 29,387 nootropic ratings, which I analyse in this post. (Adding the original SlateStarCodex data, we have 36,163 ratings from 2,802 people.) Thanks everybody!

All data and code can be found here.

RATINGS #

People interested in the code or the details of the analysis can look at the RMarkdown notebook.

How to interpret these ratings #

Scale #

I used the scale from SlateStarCodex nootropics surveys, which is:

- 0 means a substance was totally useless, or had so many side effects you couldn’t continue taking it.

- 1 - 4 means subtle effects, maybe placebo but still useful.

- 5 - 9 means strong effects, definitely not placebo.

- 10 means life-changing.

Ok but why?

Biases #

In order of importance (I think):

- Lack of random allocation

The people who entered their rating on my recommender system were not randomly assigned to try specific nootropics. Thus we can expect a (usually positive) correlation between “I’m likely to try nootropic A” and “Nootropic A might work on me”.

This means that my estimations don’t represent the ratings a random person would get on average (which would usually be lower); they’re instead a prediction of the rating that a person would give to a nootropic if they decided to take it organically. Take this into account when transferring the results to yourself.

- Self-report

You take a pill. It makes you feel good. You go on a website which asks you how good the pill is. You say it’s awesome. Little did you know that it was, in fact, merely good.

Take this into account when reading these ratings, especially if improving your mood is not your main goal.

- Lack of control and blinding

Relatedly, these uncontrolled and unblinded ratings are partly driven by the placebo effect. The sophisticated objection would be that this is probably less of a problem than for normal medications. The placebo effect seems to be in large part due to regression to the mean (when you’re sick, you’ll likely get better with time, not worse), which should not be an issue for nootropics in most cases. But the placebo effect does seem to be real for self-reported metrics, so this is probably a real issue here. Also I’m confused about the placebo effect.

All these biases sure seem inconvenient to estimate some “true rating”, but maybe they are not a problem if we just want to compare nootropics? Perhaps in some cases, but not always: lack of random allocation probably creates more bias for medications like SSRIs, which are usually prescribed to people with depression, and self-reported ratings inflation or placebo effect may be especially relevant for substances like Psilocybin, which can produce visual effects, or for hyped-up nootropics.

I’m mostly going to ignore these issues in the rest of this article, but keep them in mind and use your best judgment when comparing ratings. This also means that you shouldn’t use this data for anything more serious than reducing your nootropics search space.

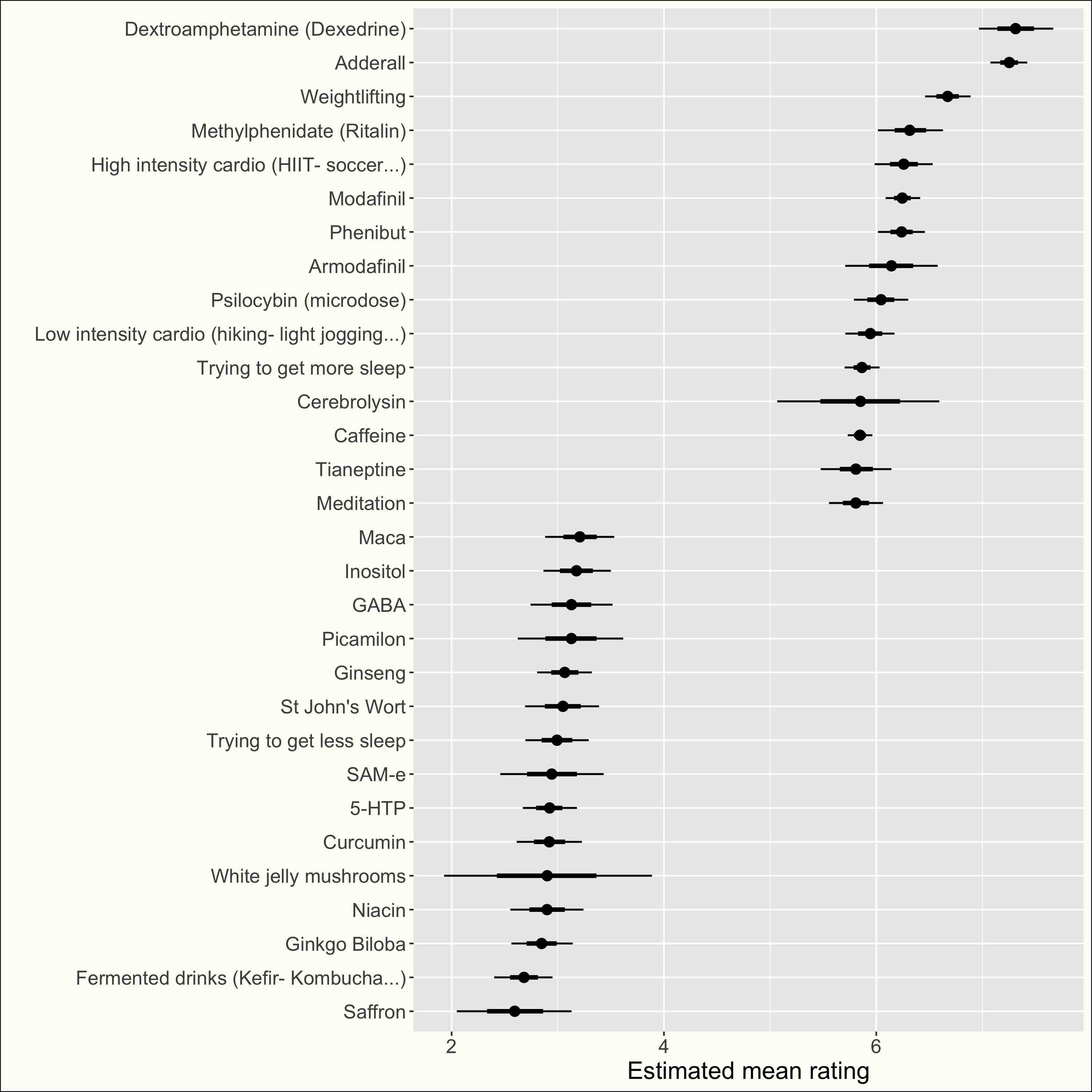

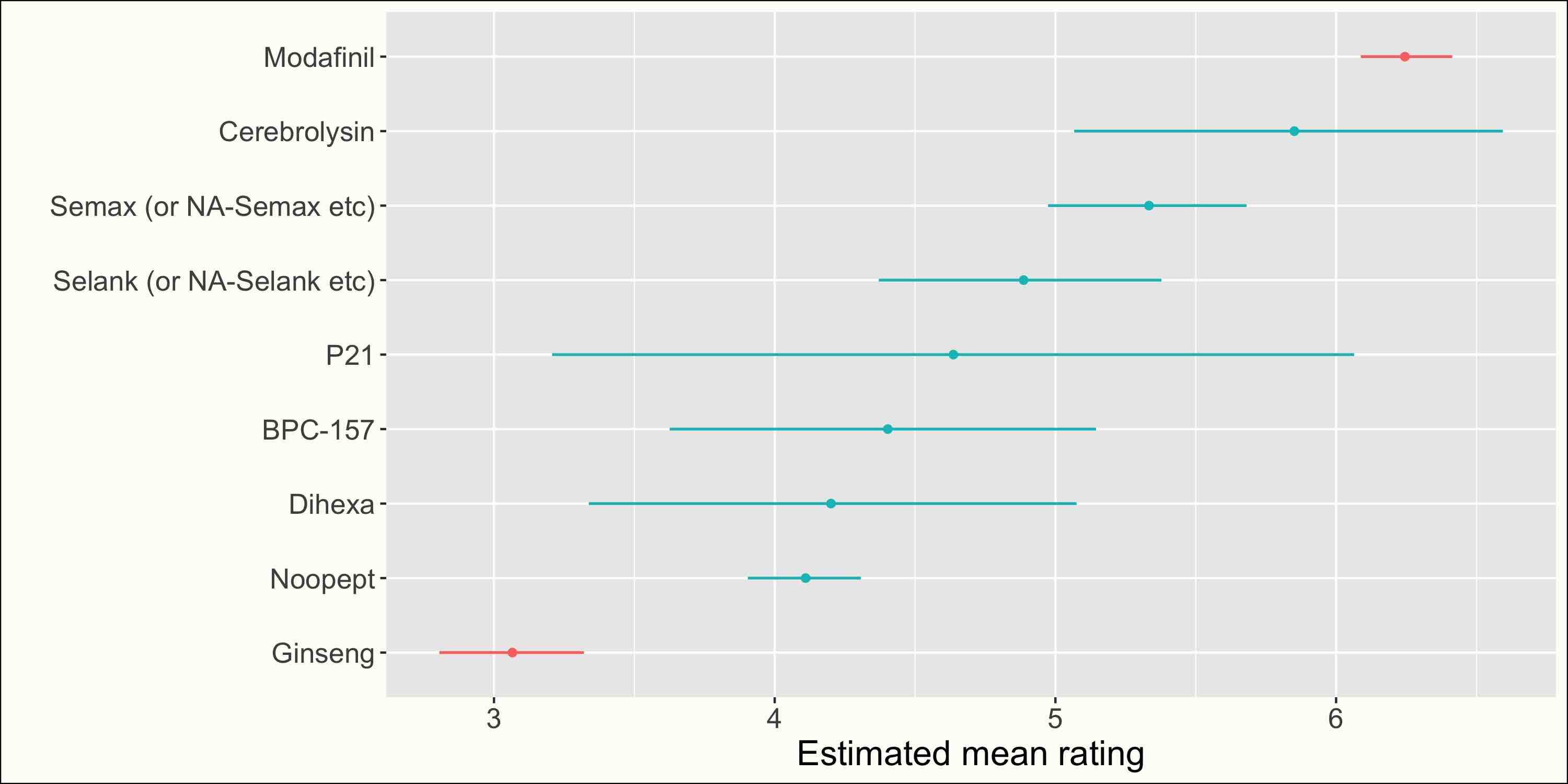

Mean rating for each nootropic #

Before plotting the mean ratings, I adjusted for the fact that different users have different rating patterns. I used a random intercept multilevel Bayesian model with weakly-informative priors. Take a look.
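As a rough illustration of what "adjusting for user rating patterns" means, here is a minimal Python sketch. It is not the Bayesian model used in this post: it fits rating ≈ nootropic mean + user offset with simple alternating updates, and shrinks the user offsets toward zero. The function name, the `shrink` parameter and the toy data are all assumptions made up for the example.

```python
from collections import defaultdict

def adjusted_means(ratings, shrink=1.0, n_iter=50):
    """ratings: list of (user, item, rating) tuples.

    Returns {item: mean rating adjusted for user offsets}.
    `shrink` acts like a pseudo-count pulling each user offset toward 0
    (a crude, non-Bayesian stand-in for a prior on the random intercept).
    """
    by_item = defaultdict(list)
    by_user = defaultdict(list)
    for user, item, rating in ratings:
        by_item[item].append((user, rating))
        by_user[user].append((item, rating))

    item_mean = defaultdict(float)
    user_offset = defaultdict(float)  # every user starts with offset 0
    for _ in range(n_iter):
        # Item means, after removing what we attribute to the user.
        for item, rows in by_item.items():
            item_mean[item] = sum(r - user_offset[u] for u, r in rows) / len(rows)
        # User offsets, shrunk toward 0 when a user has few ratings.
        for user, rows in by_user.items():
            resid = sum(r - item_mean[i] for i, r in rows)
            user_offset[user] = resid / (len(rows) + shrink)
    return dict(item_mean)

# Toy data (made up): "generous" rates everything 4 points above "harsh".
toy = [
    ("generous", "A", 8), ("generous", "B", 6),
    ("harsh", "A", 4), ("harsh", "B", 2),
    ("solo", "B", 6),  # a generous-looking user who only tried B
]
adjusted = adjusted_means(toy)
raw_gap = (8 + 4) / 2 - (6 + 2 + 6) / 3  # difference of raw means, A minus B
```

On this toy data the raw gap between A and B is about 1.3 points, while the adjusted gap is about 1.7, closer to the 2-point gap reported by the two users who rated both.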

Here are the results (click to see all nootropics):

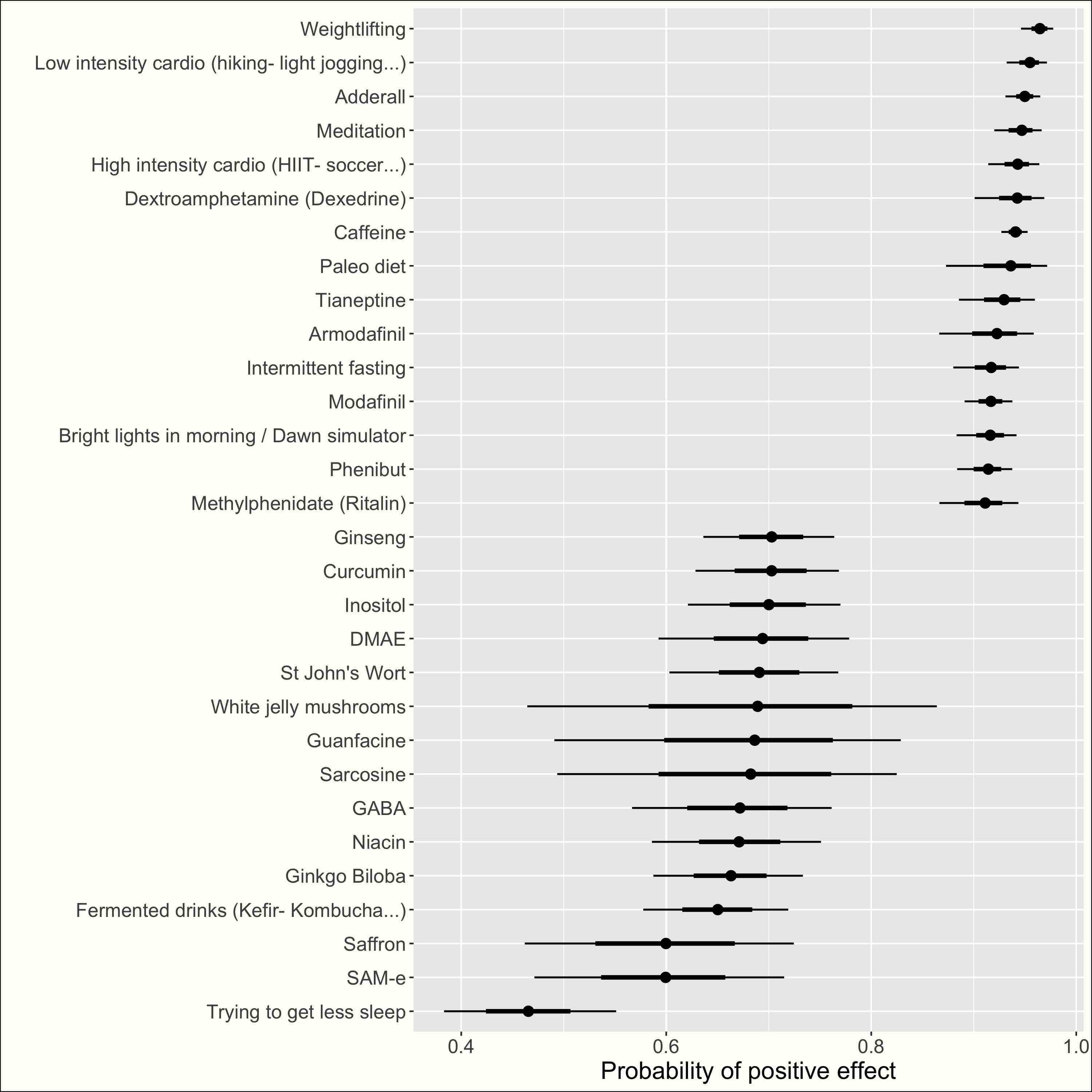

Probability of positive effect #

Given the scale I used, the estimated mean rating is not so easy to interpret. Another metric I estimated was the probability that the effect of a nootropic on a user was positive. For my scale, 0 corresponds to a neutral or negative effect, and higher ratings correspond to more-or-less confidence in a positive effect. To make sure that my results are not biased by the people who haven’t read the scale description (and might rate a negative effect higher than zero), I considered that a nootropic had a positive effect on a user if its rating was more than the user’s minimum rating (and I removed users with too few ratings). Take a look.
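The "positive effect" rule described above can be sketched in a few lines of Python. This only computes raw shares, not the model-based estimates shown in the plots, and the threshold `min_ratings=3` is an assumption (the post only says "too few ratings"). Names and data are hypothetical.

```python
from collections import defaultdict

def positive_effect_share(ratings, min_ratings=3):
    """ratings: list of (user, item, rating) tuples.

    A rating counts as positive if it is strictly above that user's
    own minimum rating. Users with fewer than `min_ratings` ratings
    are dropped (assumed threshold).
    """
    by_user = defaultdict(list)
    for user, item, rating in ratings:
        by_user[user].append((item, rating))

    counts = defaultdict(lambda: [0, 0])  # item -> [positives, total]
    for rows in by_user.values():
        if len(rows) < min_ratings:
            continue
        floor = min(r for _, r in rows)
        for item, r in rows:
            counts[item][0] += int(r > floor)
            counts[item][1] += 1
    return {item: pos / total for item, (pos, total) in counts.items()}

# Made-up example: u2 never uses 0, so 3 is treated as their "no effect".
toy = [
    ("u1", "caffeine", 6), ("u1", "piracetam", 2), ("u1", "theanine", 2),
    ("u2", "caffeine", 3), ("u2", "piracetam", 5), ("u2", "theanine", 3),
    ("u3", "caffeine", 9),  # dropped: only one rating
]
shares = positive_effect_share(toy)
```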

Here are the results (click to see all nootropics):

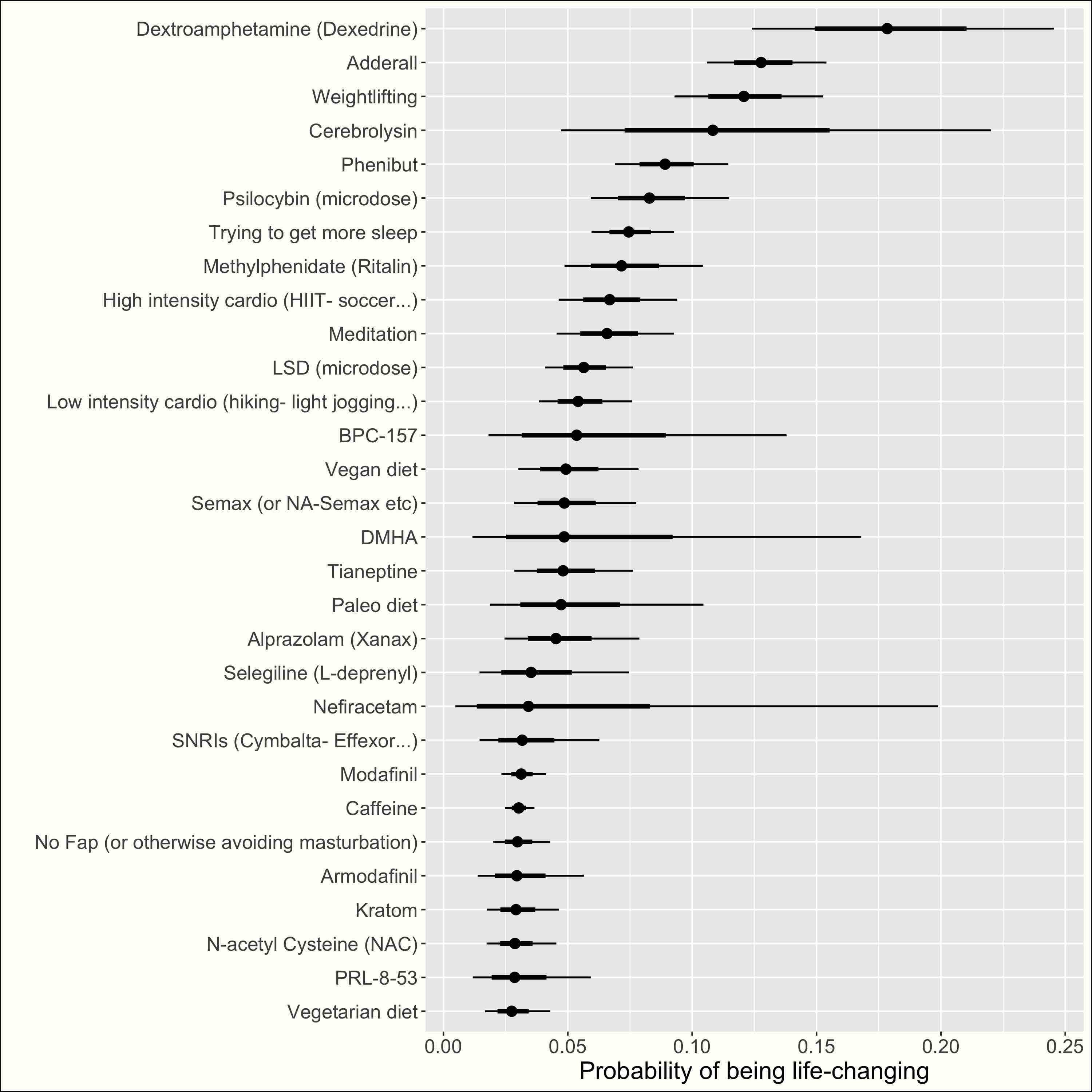

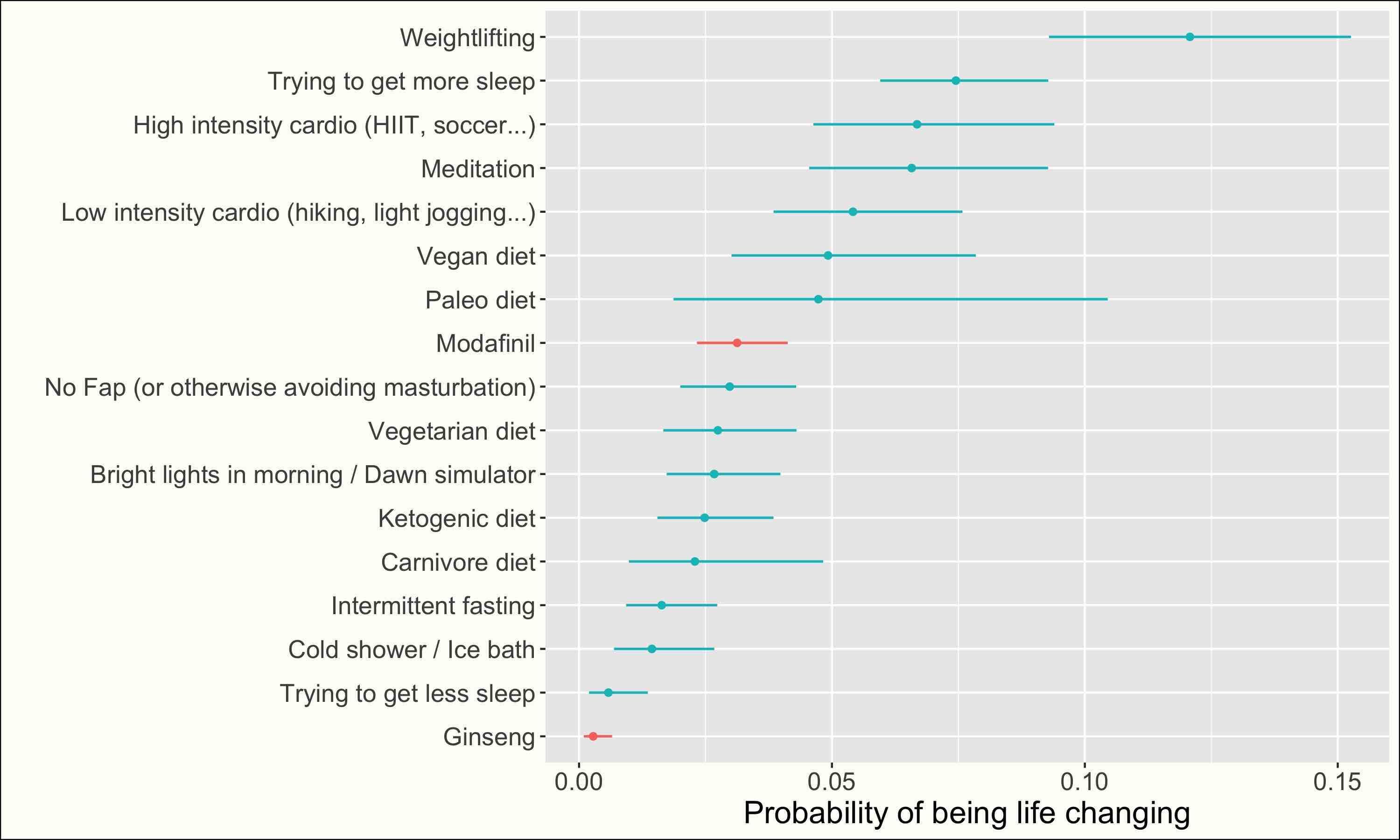

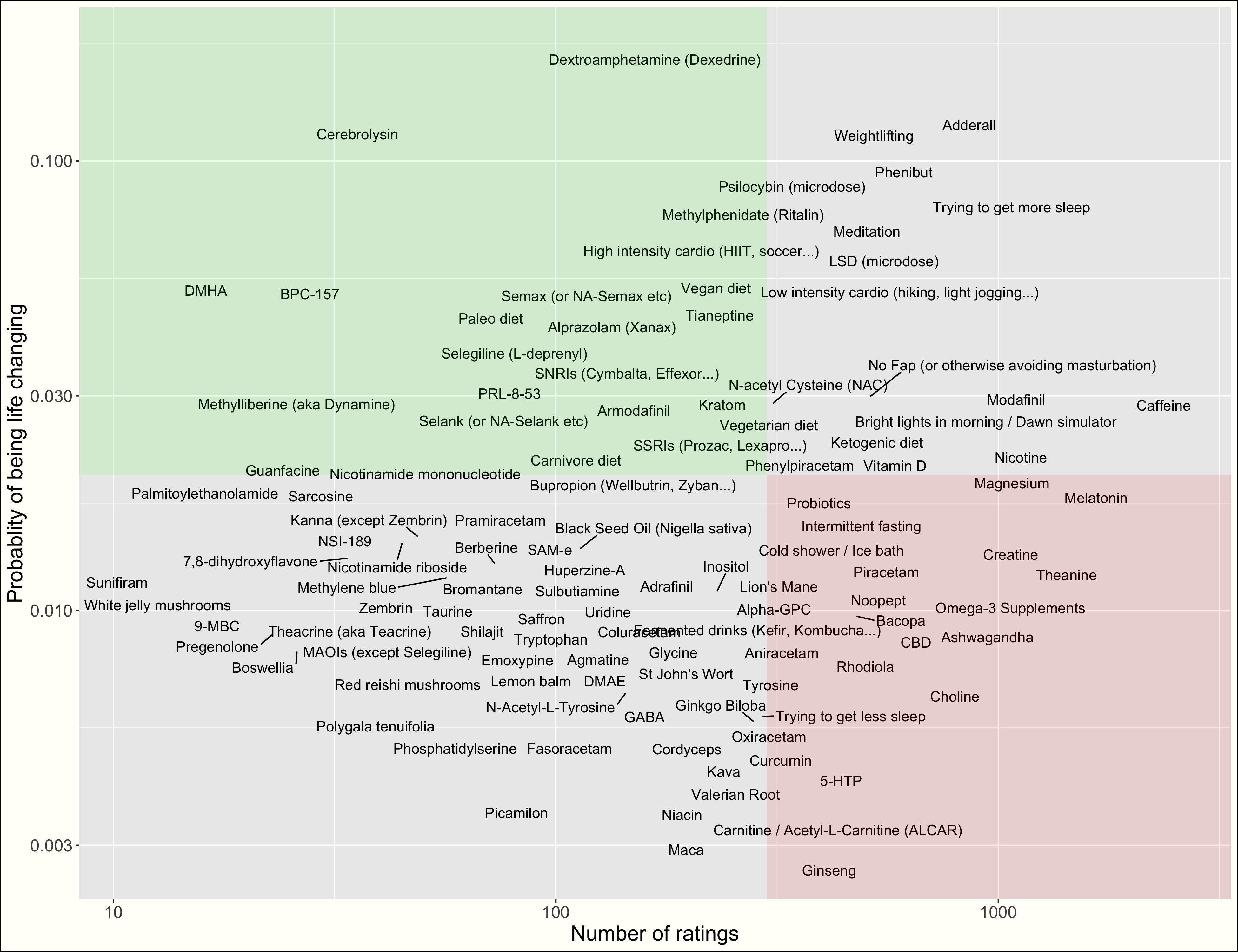

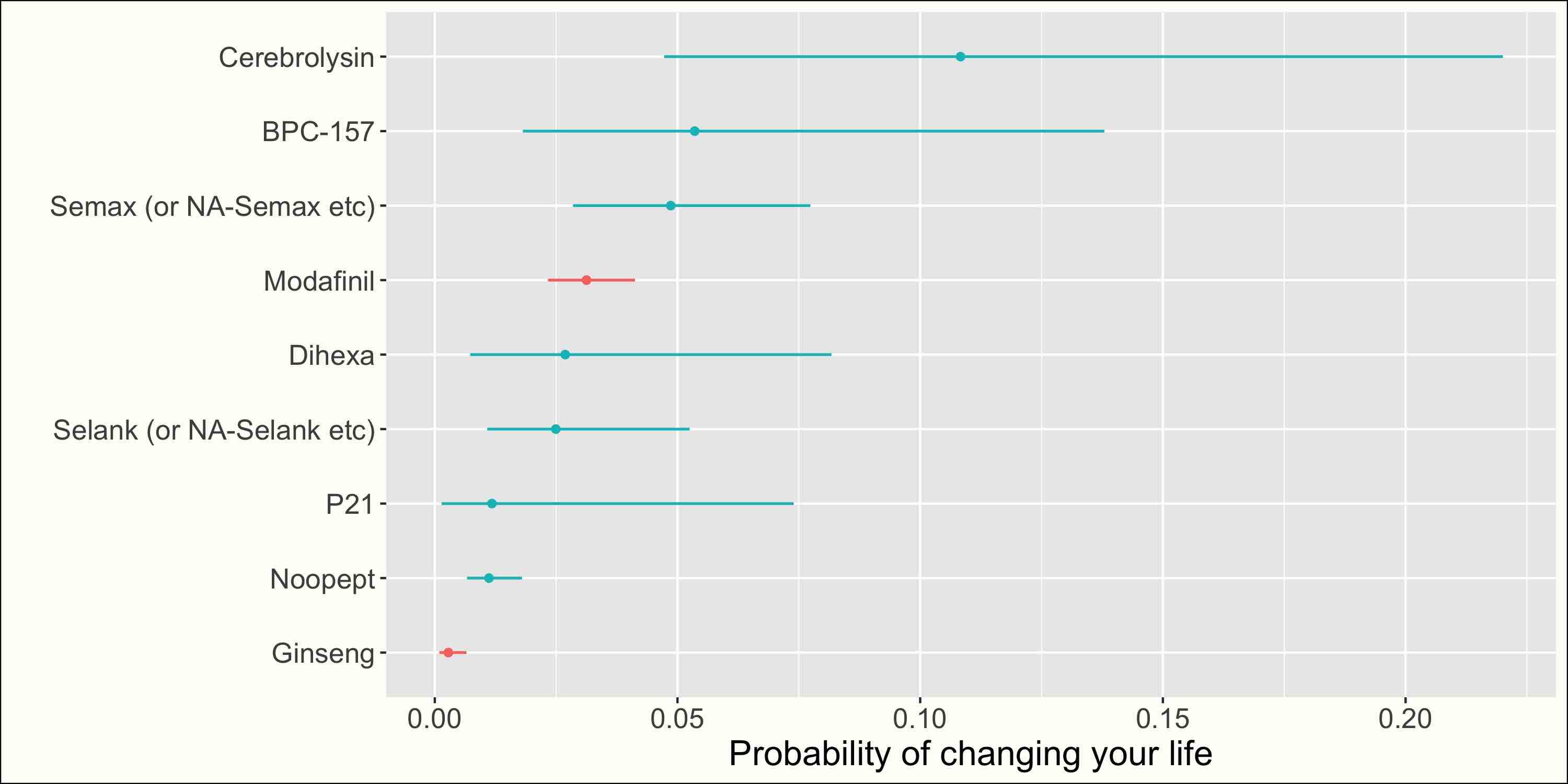

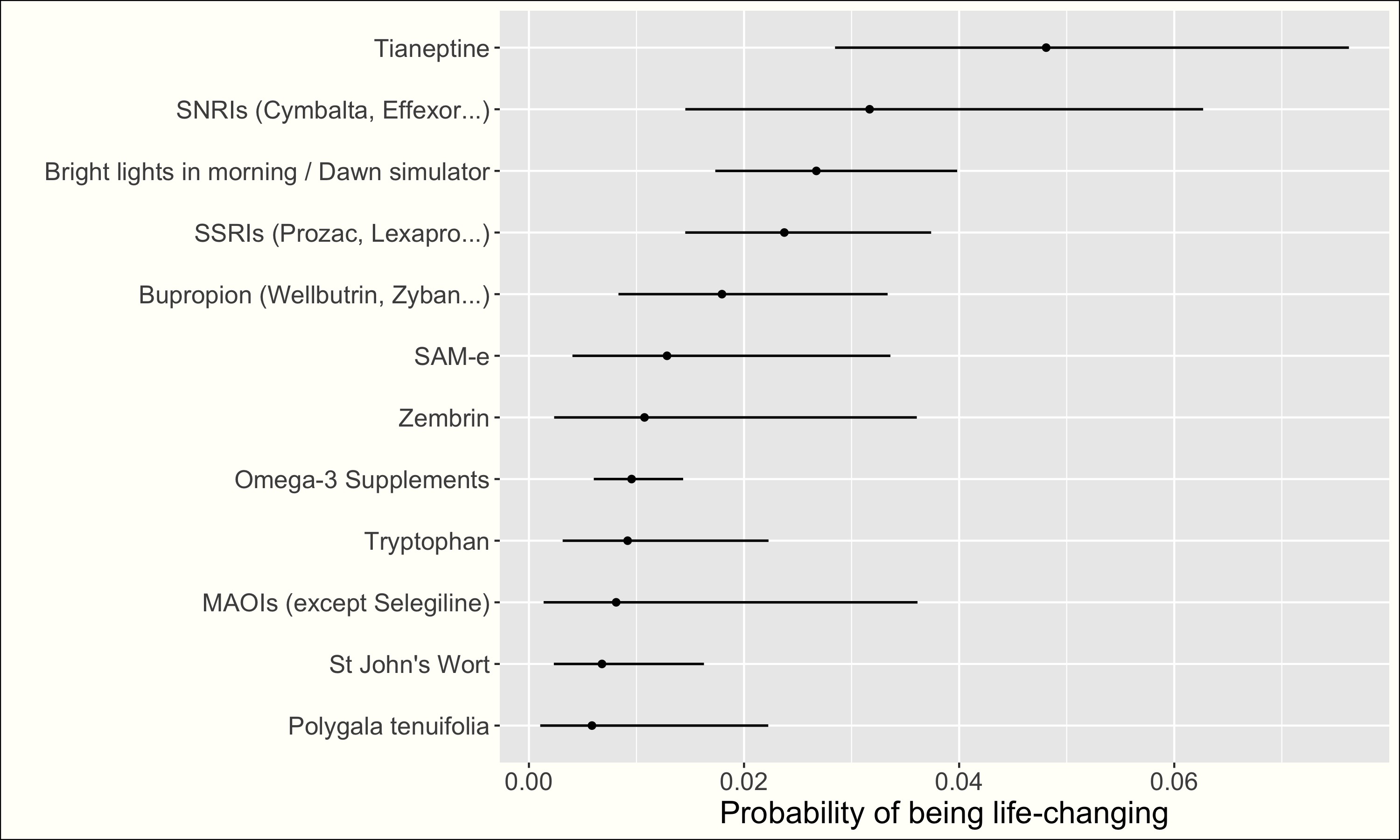

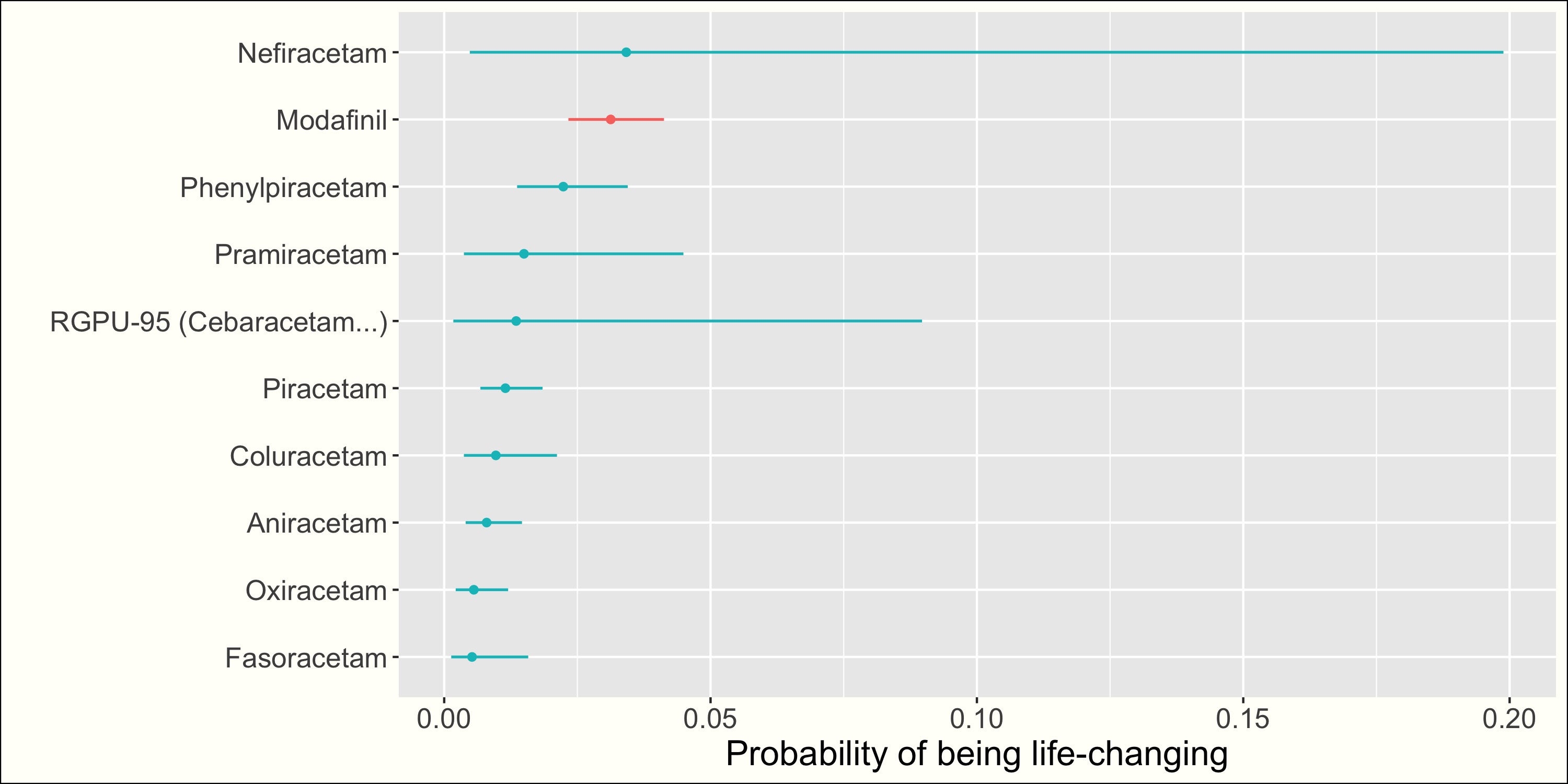

Probability of life-changing effect #

In a power-law world, it can be more useful to know the probability of a nootropic changing your life, rather than the probability of a merely positive effect. This corresponds to a rating of 10 on my scale. The probabilities estimated are probably a little bit inflated, as some people probably haven’t read the scale description and have entered 10 for a very good albeit not life-changing nootropic.
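To get a feel for how uncertain such a probability is, here is a small Python sketch that computes the share of 10s among a nootropic's ratings together with a Wilson score interval. This is a frequentist stand-in chosen for simplicity, not the Bayesian intervals reported in this post, and the function names and example counts are made up.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

def life_changing_share(item_ratings):
    """Share of ratings equal to 10, with its Wilson interval."""
    tens = sum(1 for r in item_ratings if r == 10)
    n = len(item_ratings)
    return tens / n, wilson_interval(tens, n)

# Hypothetical nootropic: 5 ratings of 10 out of 100 ratings.
share, (low, high) = life_changing_share([10] * 5 + [5] * 95)
```

With 5 tens out of 100 ratings, the interval runs from roughly 2% to 11%, which is why small samples give wide ranges.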

Here are the results (click to see all nootropics):

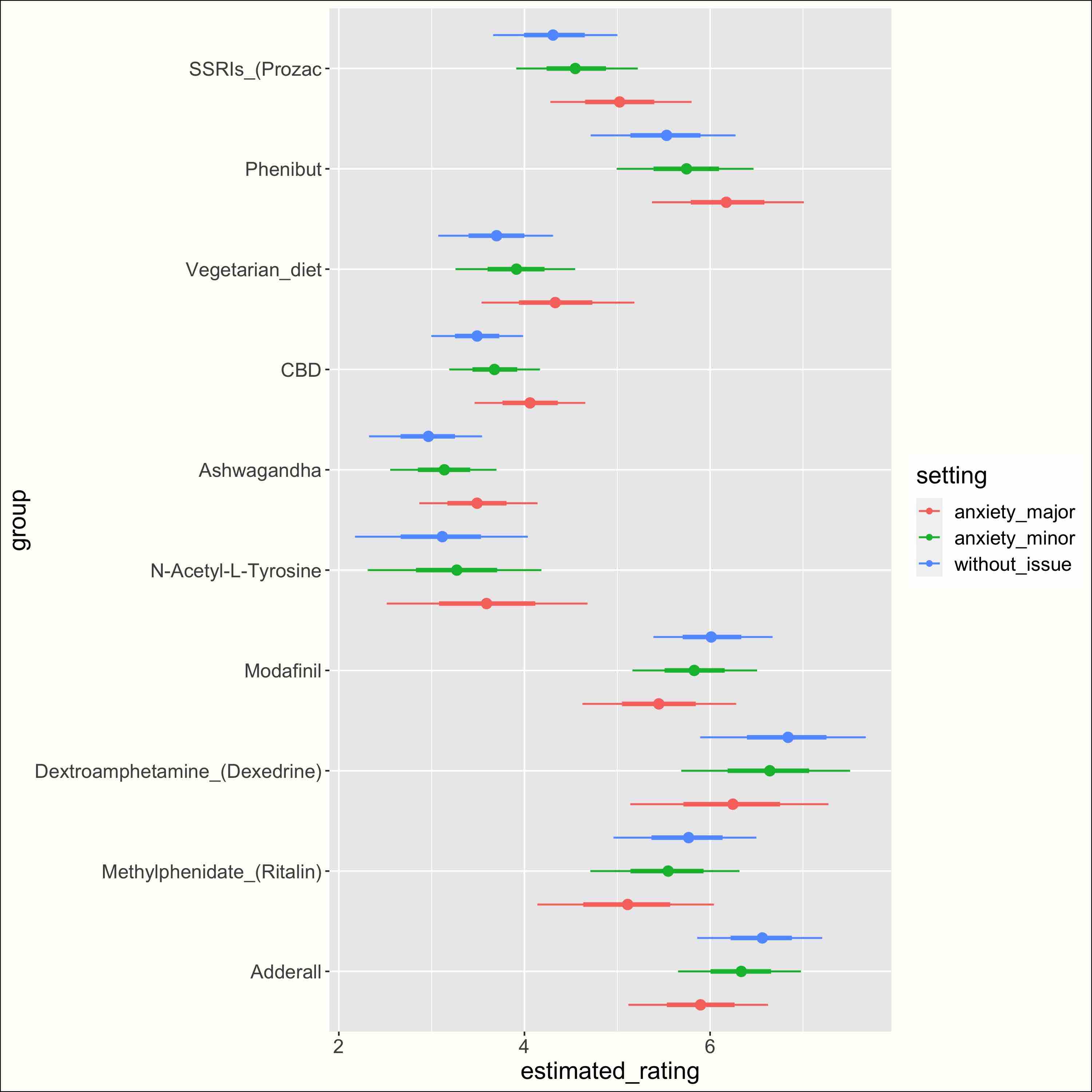

Usefulness for different usages #

With my data, identifying the usefulness of a nootropic for different use cases seems hard. I’ll set this aside for future investigation (if it’s possible at all).

More info

I haven’t asked users to rate each nootropic several times for every goal. Instead, I tried to correlate the rating for a nootropic with the indicator that a user is/was pursuing a particular goal.

For instance, here’s the estimated rating difference if a user is looking for something against anxiety:

That is, I regressed the ratings against the variables corresponding to all use cases, with e.g. the anxiety variable encoded as 1 if anxiety was rated a minor reason for nootropic use, and as 3 if it was rated a major reason. Take a look.
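A stripped-down version of this regression, with a single use case instead of all of them, might look like the following Python sketch. The encoding of "minor" as 1 and "major" as 3 comes from the description above; coding "not a reason" as 0 is an assumption, and the ratings are invented.

```python
def simple_ols(x, y):
    """Least-squares fit of y = intercept + slope * x for one predictor."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

# 1 and 3 follow the text; 0 for "not a reason" is an assumed baseline.
REASON_CODE = {"not a reason": 0, "minor": 1, "major": 3}

# Made-up answers and ratings for one nootropic.
answers = ["not a reason", "minor", "major", "not a reason", "major"]
ratings = [4.0, 4.5, 5.5, 4.0, 5.5]

intercept, slope = simple_ols([REASON_CODE[a] for a in answers], ratings)
major_vs_none = slope * (REASON_CODE["major"] - REASON_CODE["not a reason"])
```

Here `major_vs_none` is the estimated rating difference between users for whom anxiety is a major reason and users for whom it is not a reason.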

The signs of the difference seem reasonable, but the magnitude seems weird. For instance, Phenibut is less than one point higher for people for whom anxiety is a “major reason” for nootropic use, compared to people for whom it’s not a reason. This might just be that my estimates are very noisy (it can also be due to “Not a reason” being the default choice, though I removed people who hadn’t entered any reason), but I would also worry that my estimates would not be causal. For instance, I think I spotted a case of reverse causality: SSRIs (notorious for creating undesirable sexual side effects) are rated higher by people for whom libido is a major reason for nootropic use. I guess the causal graph looks like “I like SSRIs” –> “I continue taking SSRIs” –> “I have libido issues” –> “I’m looking for a nootropic to improve my libido”. This one is easy, but I wouldn’t know how to interpret it if I found that some nootropic was unexpectedly good for anxiety.

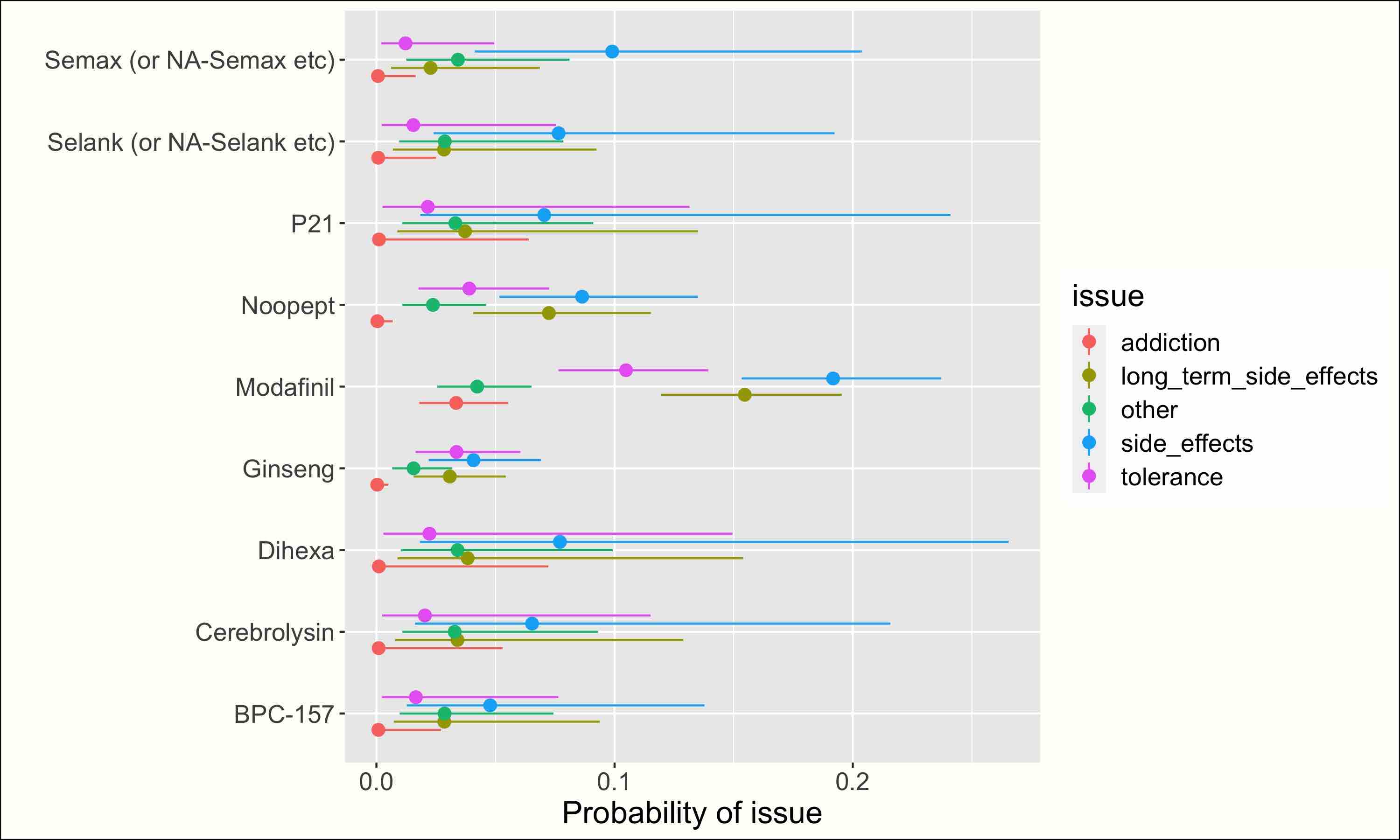

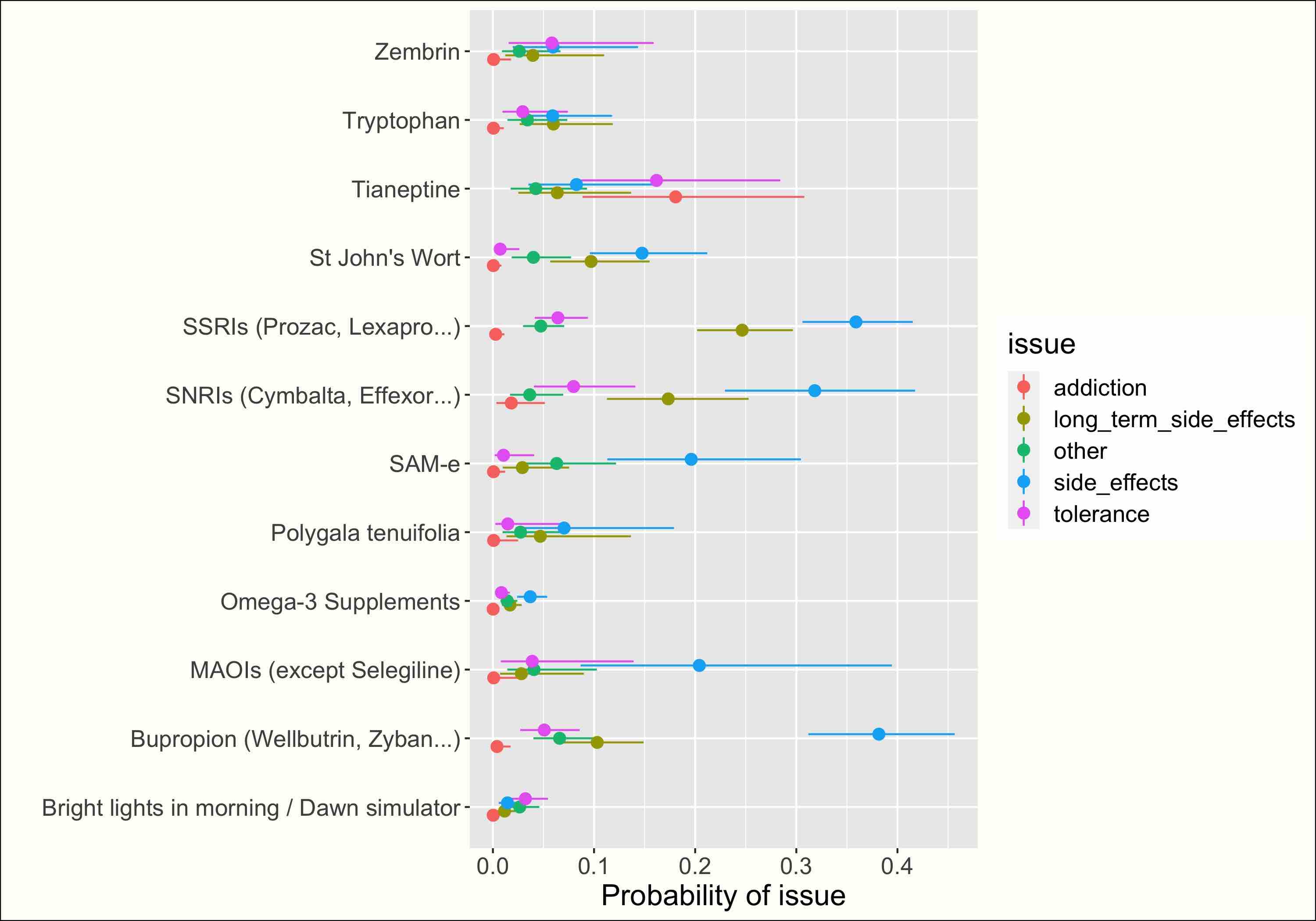

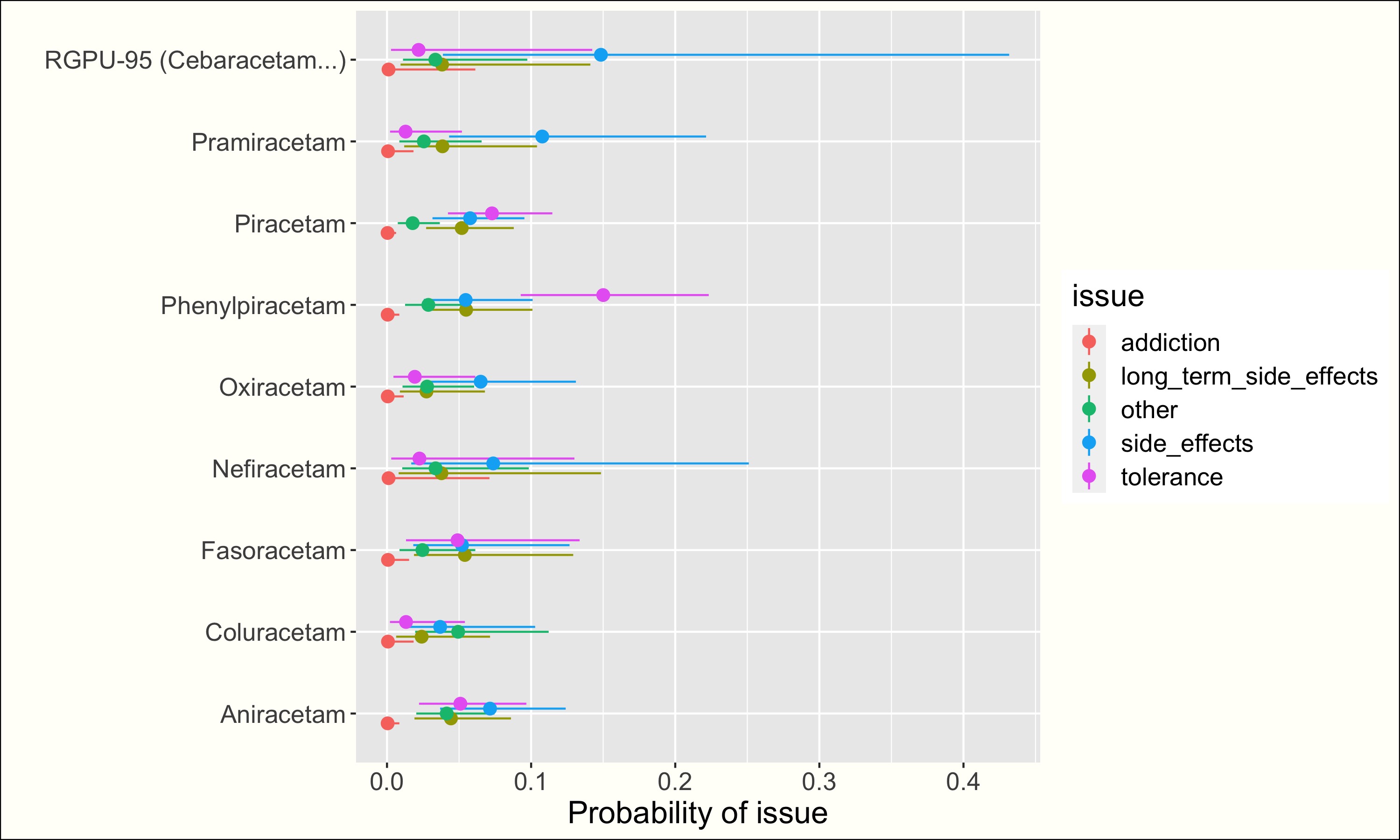

RISKS #

The risks of prescribed medications such as Adderall, SSRIs and Modafinil are quite well-documented, but information on the risks of weirder nootropics is scarce.

For instance, what would you guess is the probability of becoming tolerant to Phenylpiracetam (apparently between 10 and 20%)? Of becoming addicted to Kratom (apparently between 15 and 25%)?

I tried different things to account for non-response bias (many people didn’t enter the issues they had): I tried to create reasonable bounds, and then built a probabilistic model including non-response behaviors, checking that it seemed to stay within my reasonable bounds. Take a look. But beware: the figures in this section are more uncertain than the ratings in the previous section and may depend on some modeling assumptions.

One thing that can be misleading: my estimates are a weighted average between the baseline probability of each issue and the specific probability of an issue for a nootropic. When the sample size is very small, the model basically returns the baseline probabilities. This can be misleading if you believe that a nootropic is not typical. (In other words, if you have information about a nootropic, you’re no longer in a setting where nootropics are exchangeable, which is an assumption behind my hierarchical model.) If you want more reliable results, you can get reasonable bounds on issue probabilities in the RMarkdown notebook, where I also present the model I used in the sections below.
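The "weighted average" behaviour can be illustrated with a tiny Python sketch. This is a simplified shrinkage formula, not the hierarchical model from the notebook: the `prior_strength` value (how many observations the baseline is "worth") and all numbers are assumptions picked for illustration.

```python
def shrunk_probability(issue_count, n_users, baseline, prior_strength=20.0):
    """Weighted average of a nootropic's raw issue rate and the baseline.

    The weight on the raw rate grows with the sample size; with very few
    users the result stays close to `baseline`.
    """
    weight = n_users / (n_users + prior_strength)
    raw = issue_count / n_users if n_users else 0.0
    return weight * raw + (1 - weight) * baseline

# Tiny sample: 0 issues among 2 users barely moves the 10% baseline.
small = shrunk_probability(0, 2, baseline=0.10)
# Large sample: 50 issues among 200 users stays close to the raw 25%.
large = shrunk_probability(50, 200, baseline=0.10)
```

With two users the estimate is about 9%, almost the baseline, whereas with 200 users it is about 24%, almost the raw rate.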

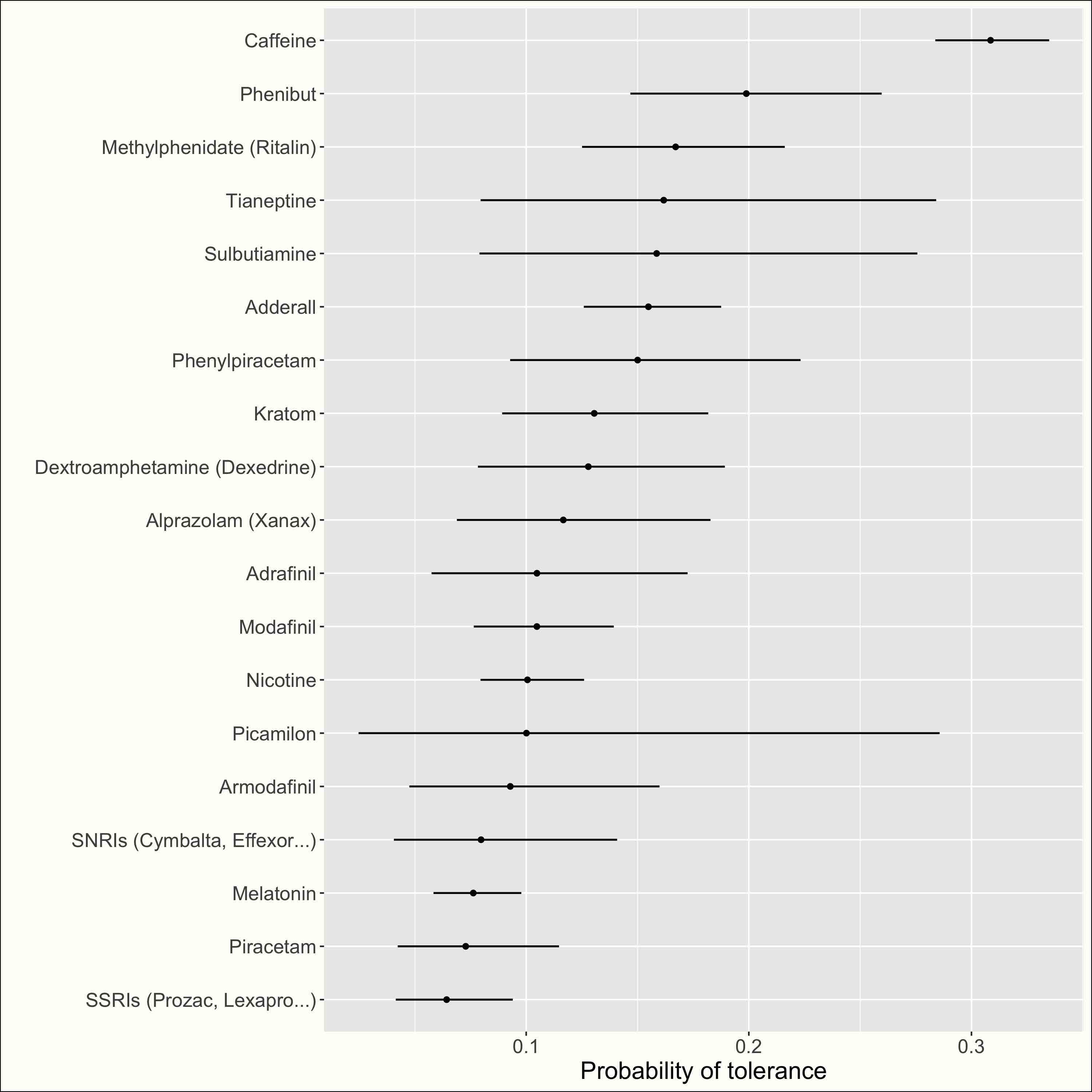

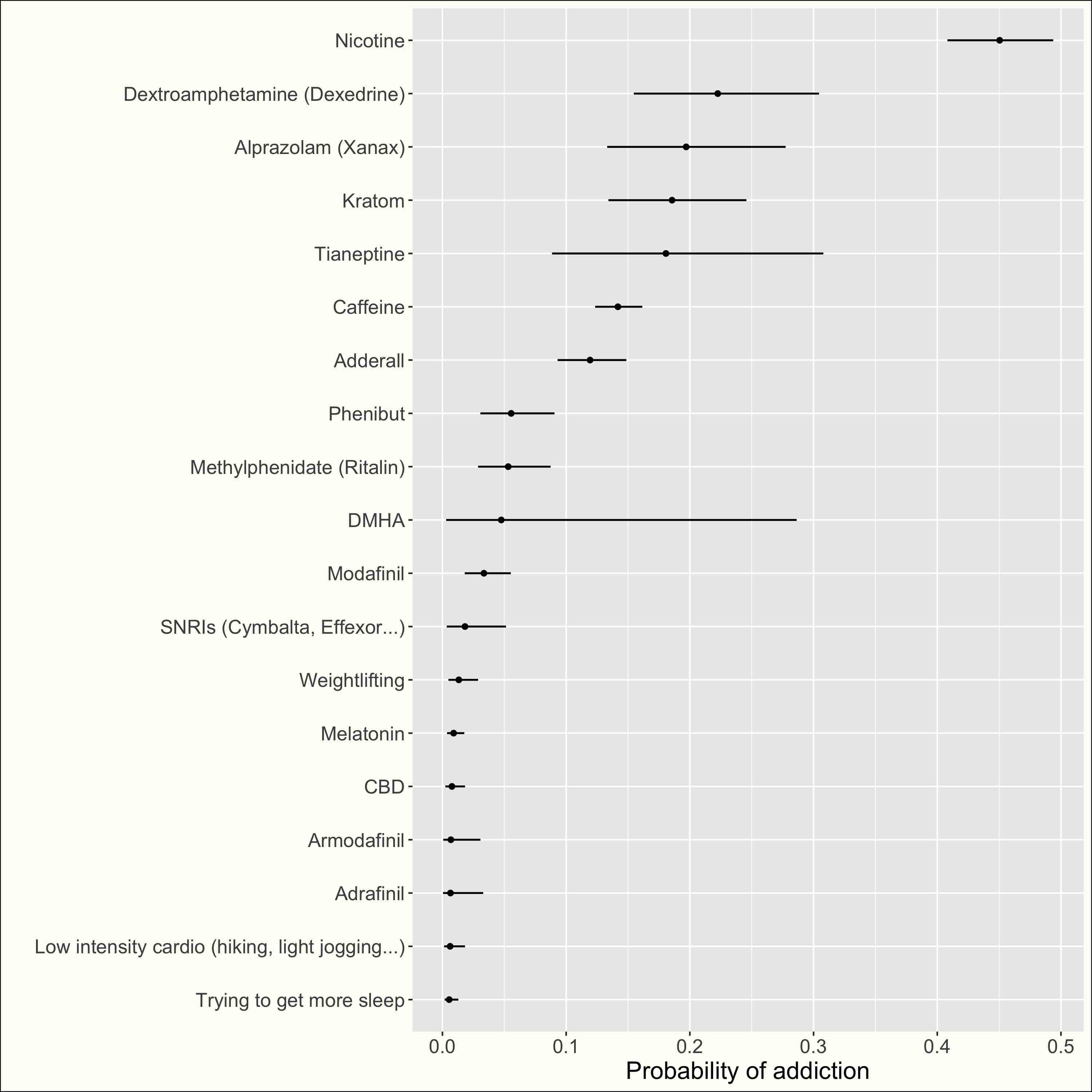

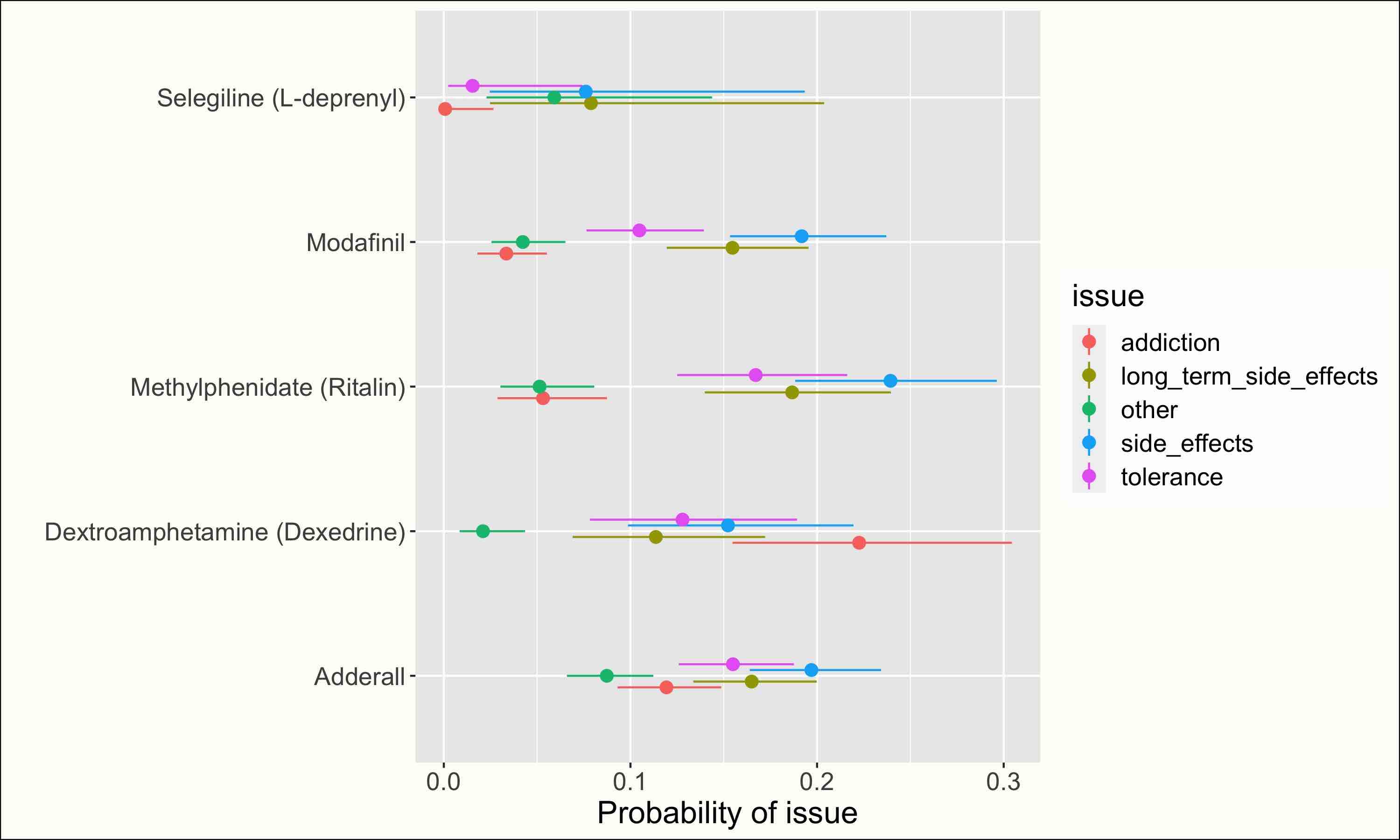

Addiction and tolerance #

Click to see all nootropics:

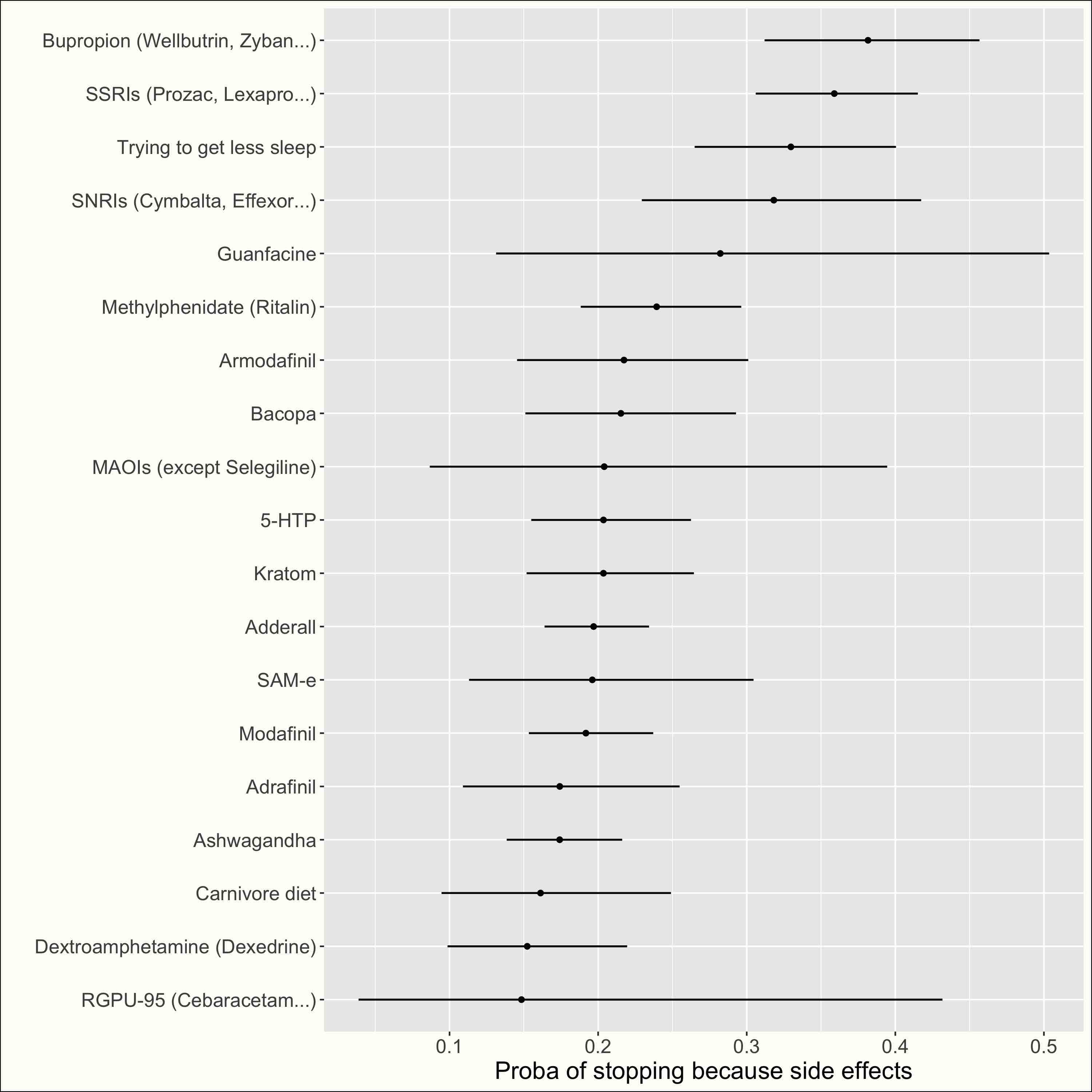

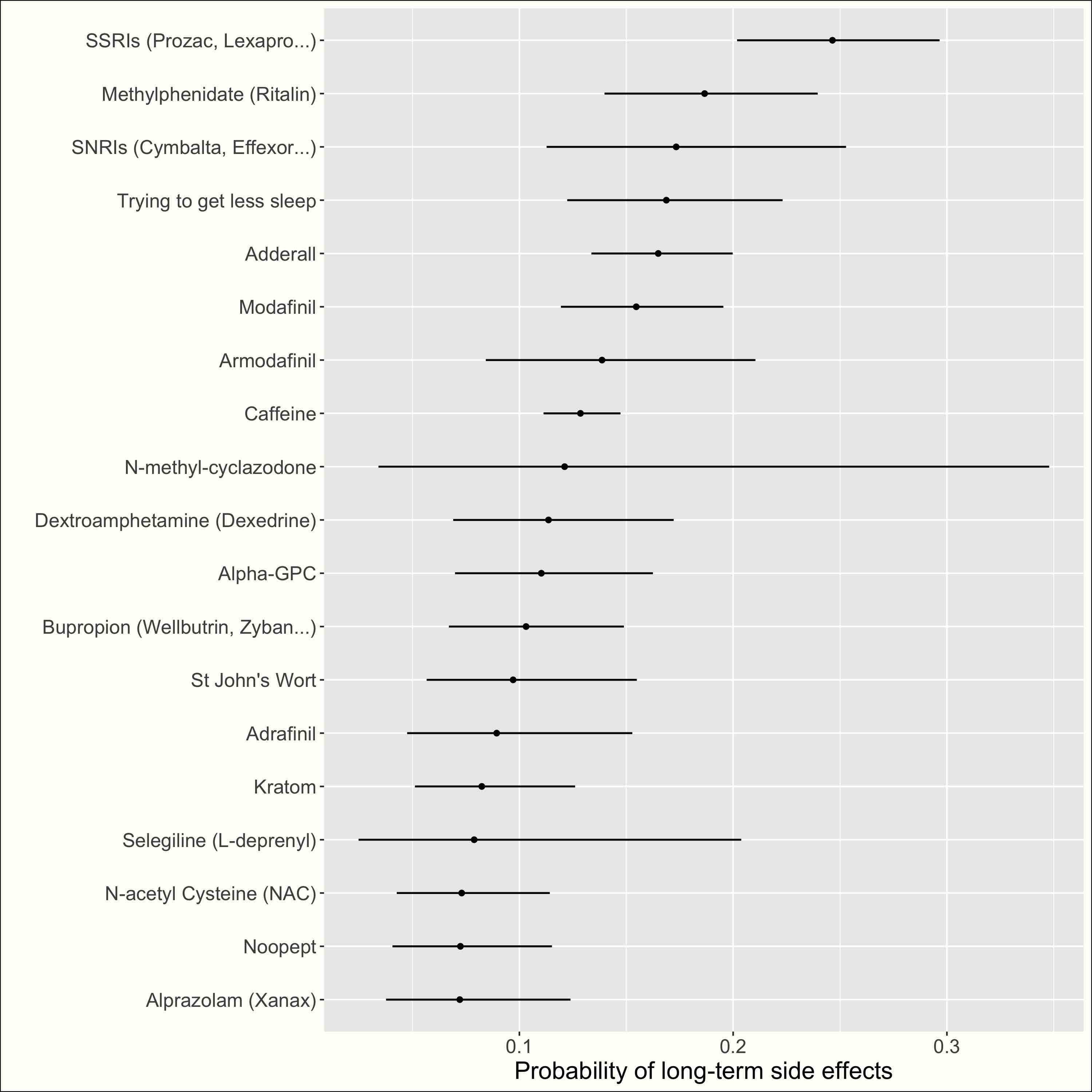

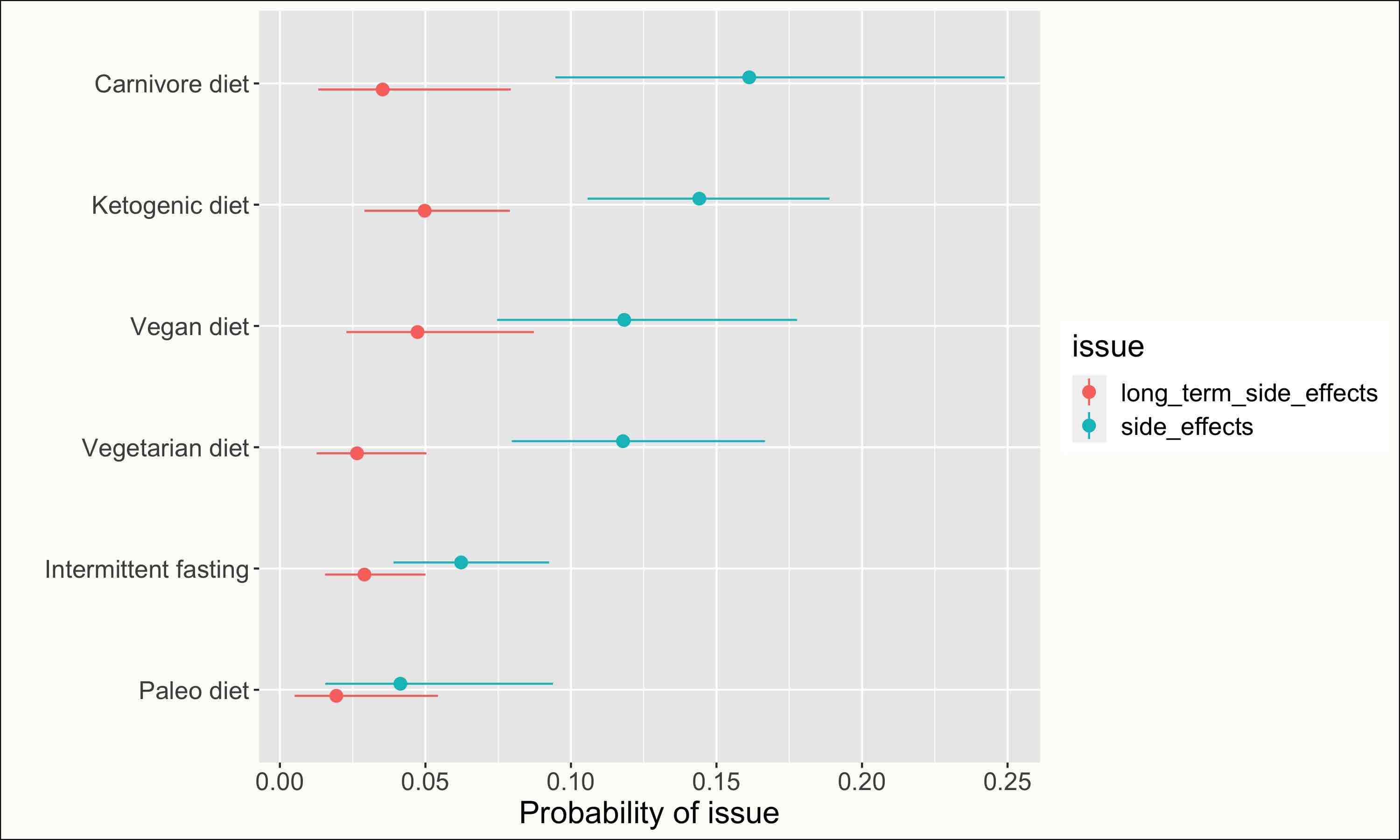

Side effects #

Click to see all nootropics:

EDIT: As pointed out by a commenter, the estimated probabilities of long term side effects are somewhat surprising. I’m not completely sure what people had in mind when entering “long term side effects”, and thus I’m not completely sure how to interpret these probabilities.

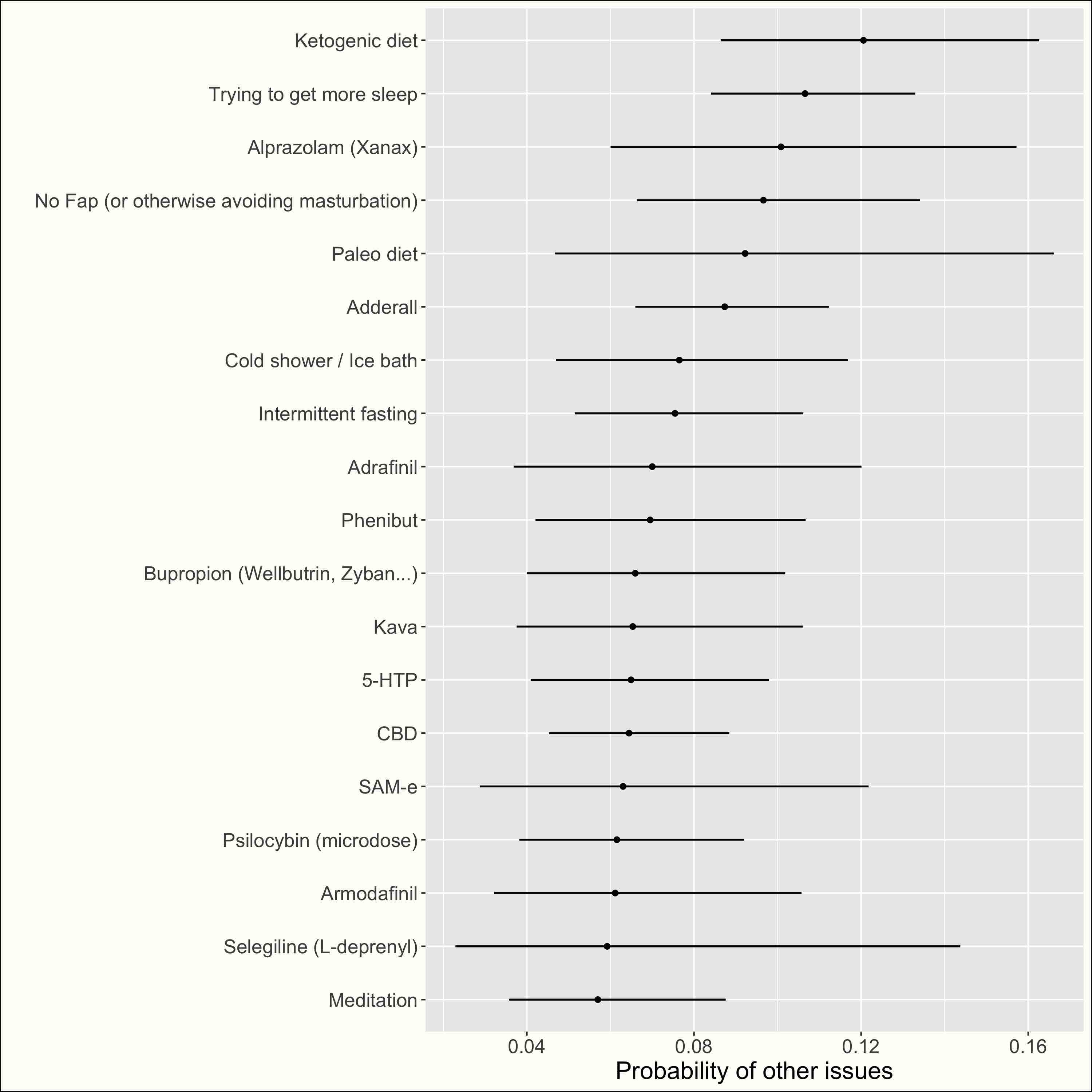

Other issues #

Click to see all nootropics:

WHAT I LEARNED #

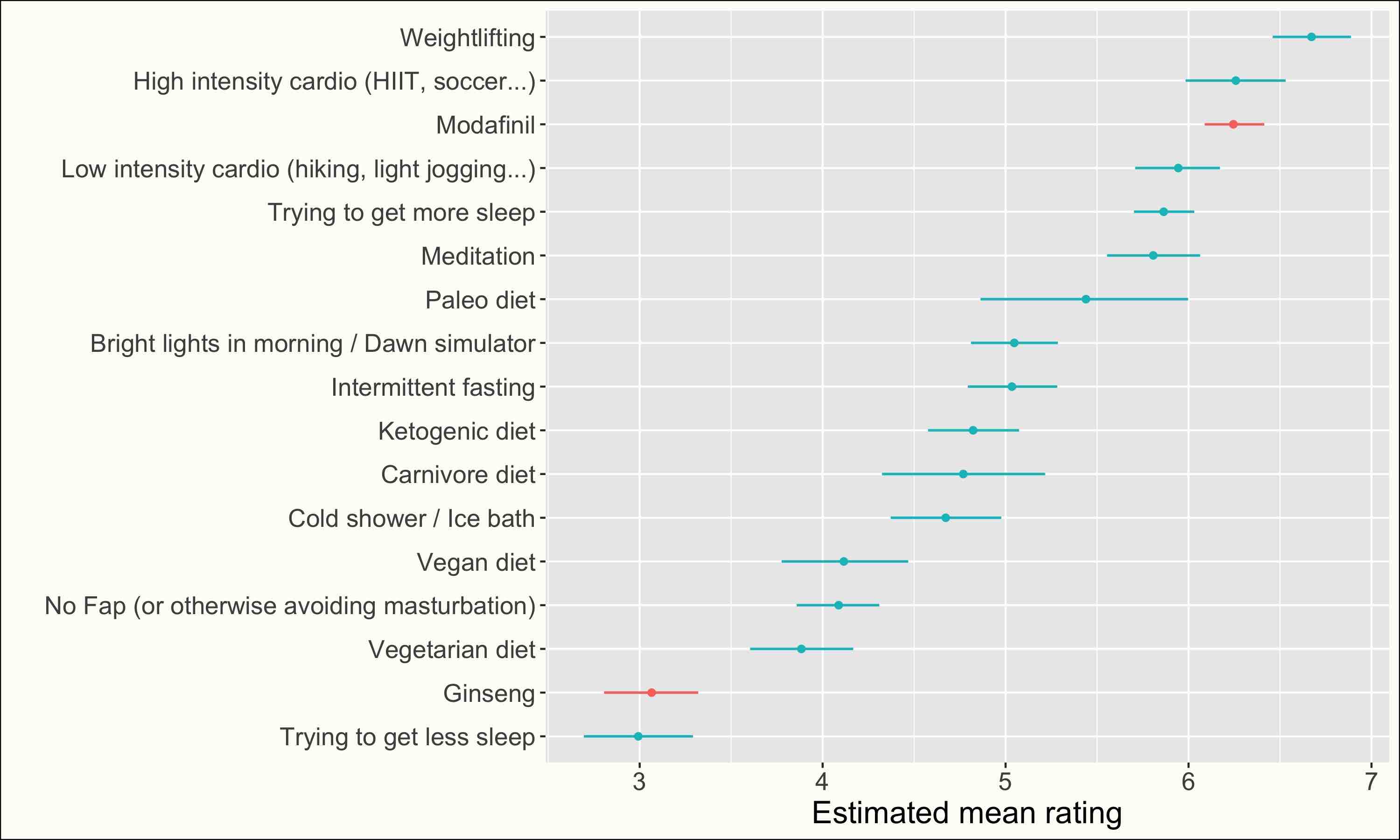

Lifestyle is a strong nootropic #

My recommender system includes some “lifestyle changes” like sleep or sport (I asked people to rate only their cognitive effects), and this kind of “nootropic” is very, very highly rated.

See ratings

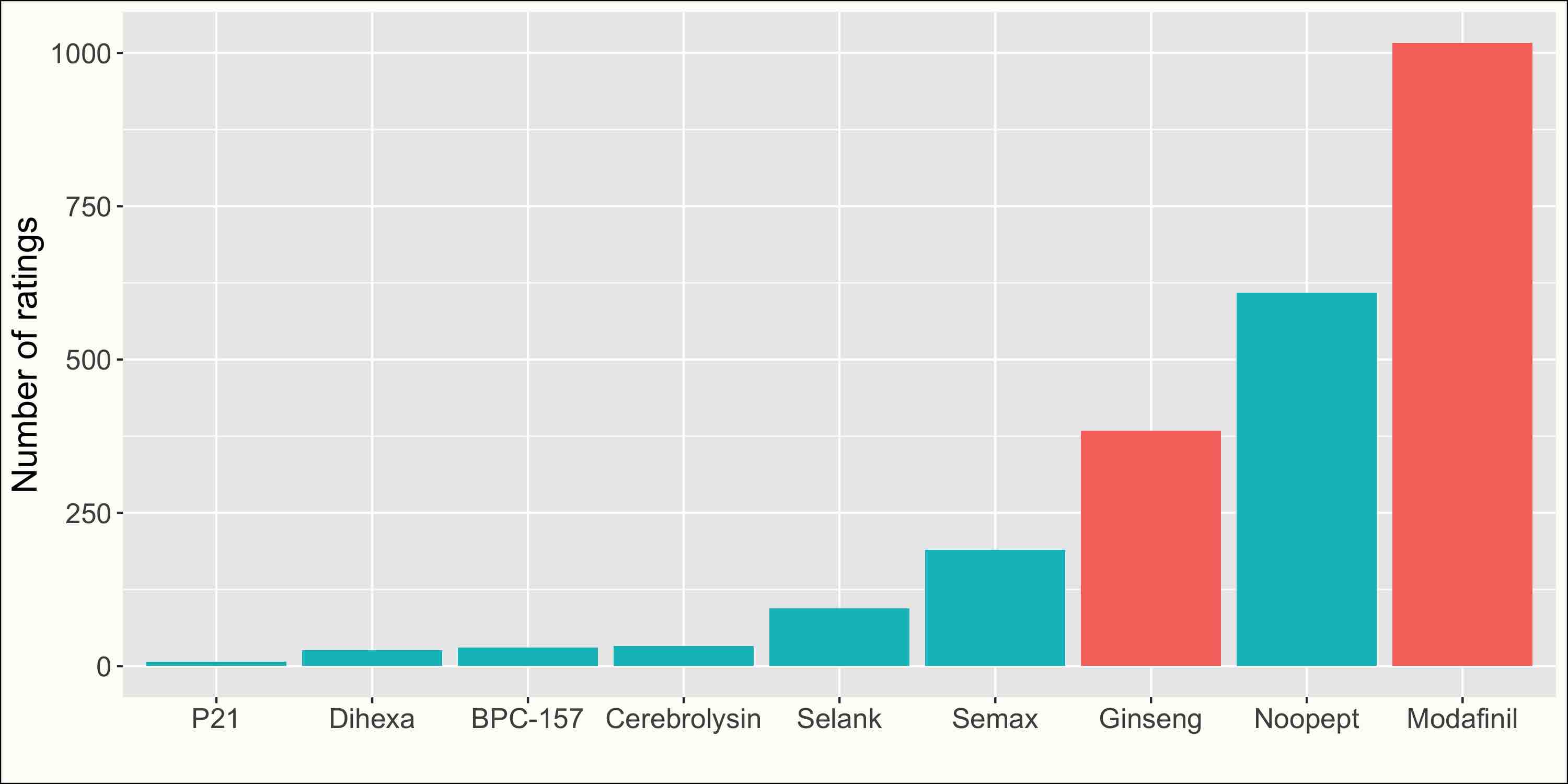

I’ve included Modafinil (very highly-rated nootropic) and Ginseng (weak nootropic) for comparison.

Sport and sleep #

Some people like to make fun of the nootropic community for tirelessly experimenting with weird chemicals instead of fixing their sleep schedule and going out for a run (to be fair, this is literally the first advice on r/nootropics). Unfortunately for me, they may be right. I knew sleep and exercise were very important, but I was still surprised to see them so high, even compared to powerful prescription medications like Adderall and Modafinil: sleep and all sport categories are in the top-10 for every metric, weightlifting and low-intensity exercise are ranked 1st and 2nd for “probability of having a positive effect”, and weightlifting is ranked 3rd for “probability of changing your life”. Among the different sport categories, “high-intensity exercise” (HIIT, soccer…) is rated a bit higher than “low-intensity exercise” (hiking, light jogging) though the difference is small.

Weightlifting #

Among the different sport categories, weightlifting is noticeably better rated (+0.5 points on adjusted mean) and is actually among the very best nootropics in my database. Furthermore, very few issues are reported. This is very impressive, and maybe you should consider trying it. You may be wondering why you should trust this self-reported data if you don’t trust your gym bro friends who can’t stop talking about how weightlifting changed their life. I’m just saying that there are a lot of people saying the same things in my data. Maybe they’re all the same gym bros, but maybe it means that you should start taking them seriously.

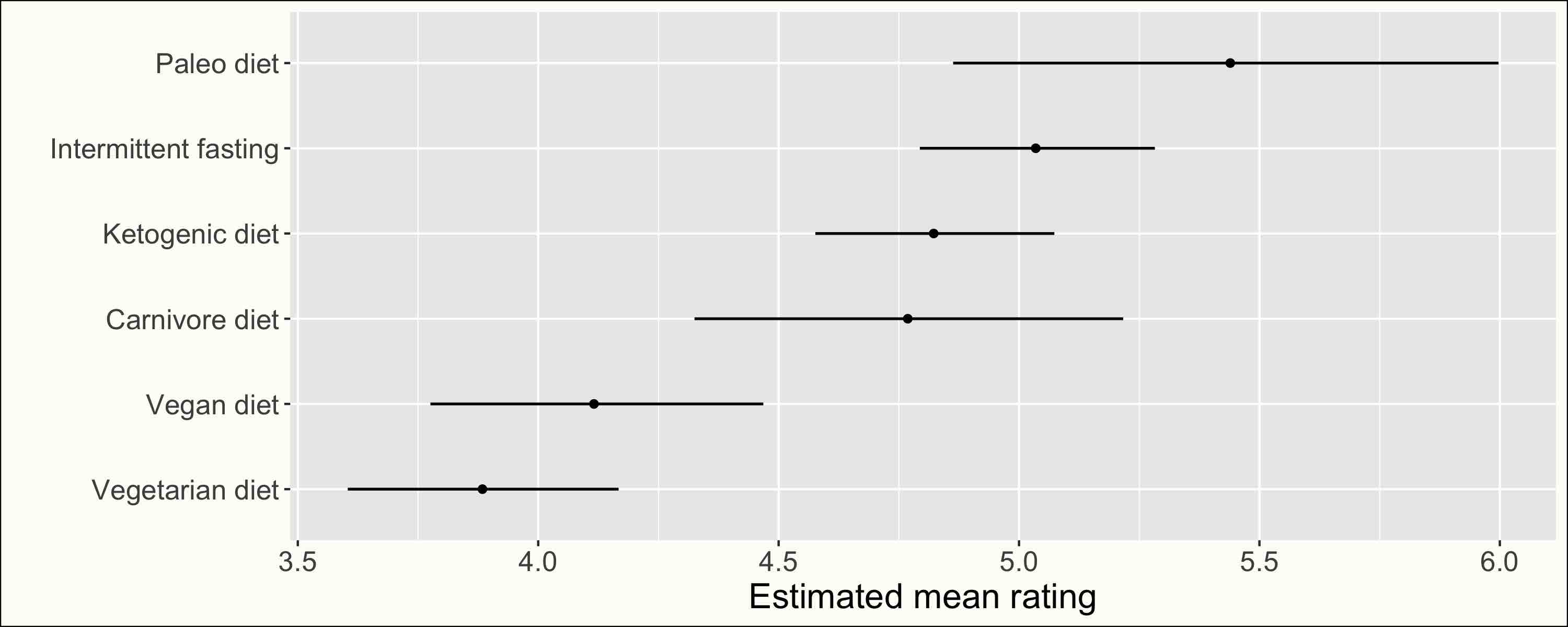

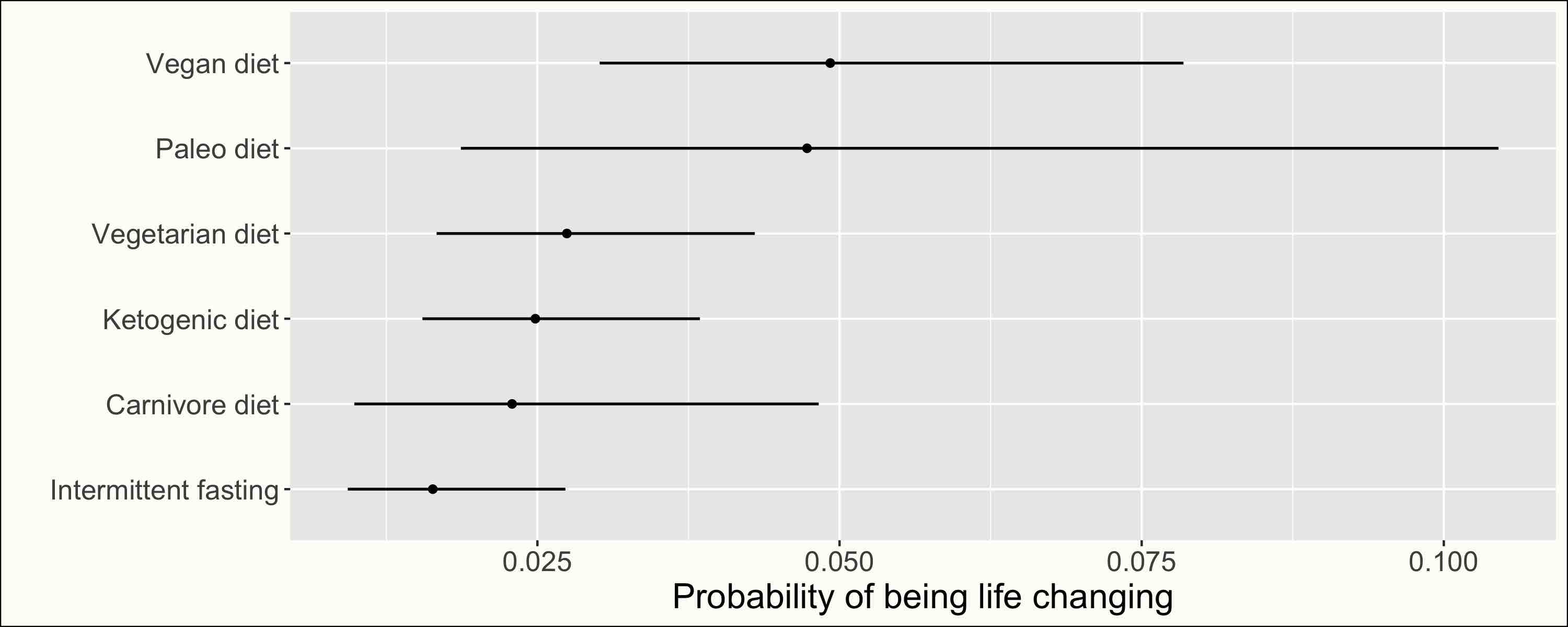

Diets #

See ratings

See issues

If sport and sleep are the nootropic low-hanging fruits, diets are the fruits you can maybe reach on tiptoes. For instance, the Paleo diet, with a mean rating of 5.4, is in the top-20, and Intermittent Fasting, with a mean rating of 5, is in the top-30.

Low-carb diets (Keto, Carnivore, Paleo) are rated much higher than Vegetarian or Vegan diets, though the Vegan diet is first for probability of changing your life, with around 5% [3-8%, 95% interval] probability, similar to the Paleo diet.

Comparing issues reported, people often stop the Carnivore and Keto diets because of side effects, with a probability between 10 and 20%, much higher than the Paleo diet (between 2 and 10%), and somewhat higher than the Vegetarian and Vegan diets (between 7 and 17%).

The Paleo diet seems to be the winner here, though I’m wondering how its particular branding might be skewing results.

Other lifestyle interventions #

Meditation (mean rating = 5.8), bright lights in the morning (5), cold shower (4.7), and masturbation abstinence (4.1) also got impressive to pretty good ratings.

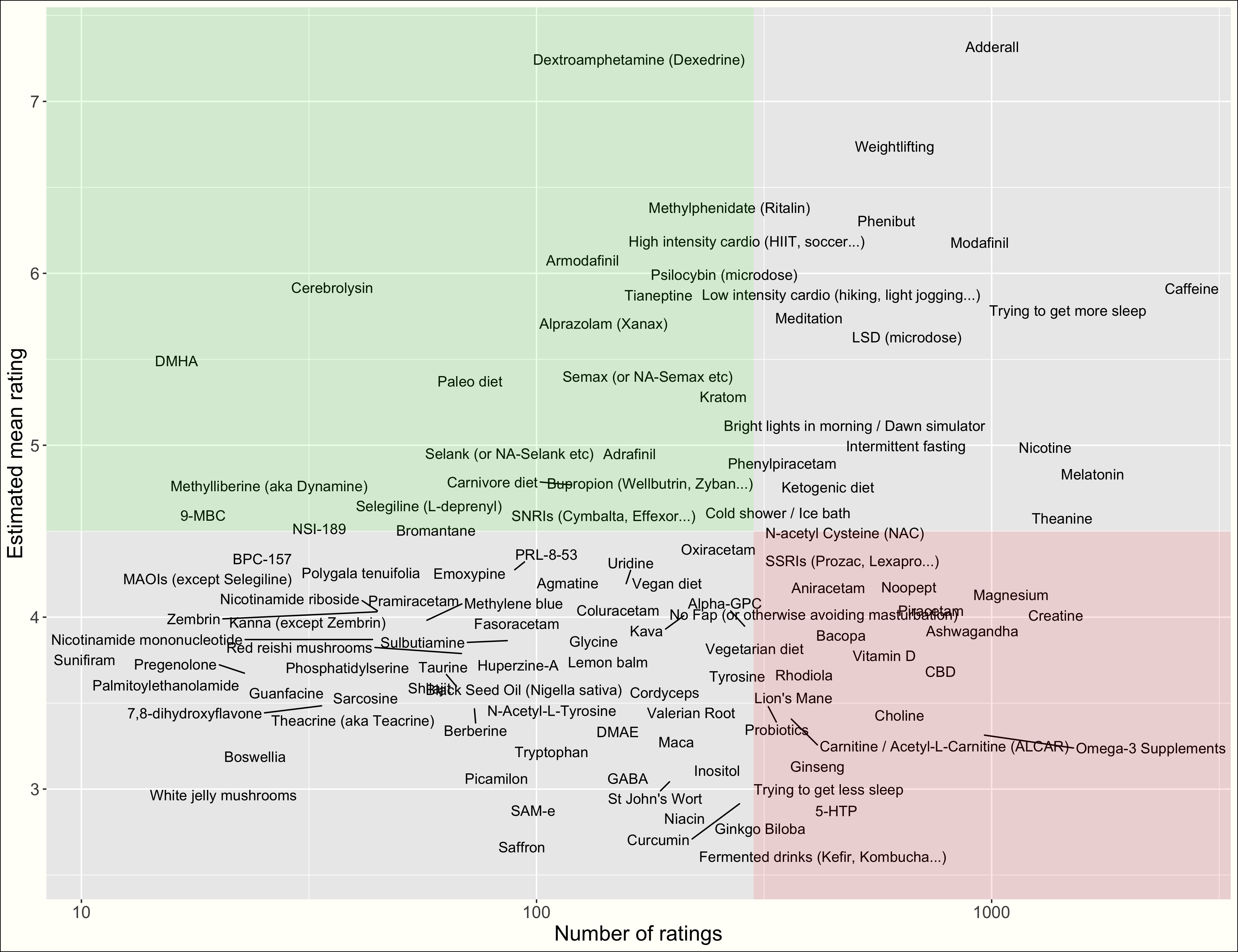

Most famous nootropics aren’t that good #

What are the first things that come to mind when you think of nootropics? Piracetam? Ashwagandha? Ginseng? Theanine? Most of these common nootropics actually got relatively poor ratings. This is compared to potent prescription-only medications like Adderall, but also to sport (see above) and to a lot of lesser-known nootropics.

The plots below show all the common-but-mediocre nootropics (red rectangle) and the uncommon-but-great nootropics (green rectangle):

At least my data show that most substances in the red rectangle are pretty safe (the risk of addiction seems tiny, but do note that an estimated 5% of people develop persistent side-effects from Piracetam and Ashwagandha, and 20% have to stop Bacopa because of side-effects; I’m not sure most of these substances are worth trying), but I would argue that in a lot of cases, it’s probably a better idea to try more potent nootropics a few times each until you find something truly life-changing (I will develop this idea here).

Below, I will discuss most of the nootropics in the green rectangle. Some of these nootropics, like Semax and Tianeptine, were already mentioned in the 2016 SSC Survey.

Peptides are underrated #

See plots

Be careful: my estimates are a weighted average between the baseline probability of each issue and the specific probability of an issue for a nootropic. When the sample size is very small (like for Cerebrolysin), the model basically returns the baseline probabilities, which can be misleading if you believe that a nootropic like Cerebrolysin is not typical. In other words, if you have information about a nootropic, you’re no longer in a setting where nootropics are exchangeable, which is an assumption behind my hierarchical model.

Selank, Semax, Cerebrolysin, BPC-157 are all peptides, and they are all in the green “uncommon-but-great” rectangle above. Their mean ratings are excellent, but their probabilities of changing your life are especially impressive: between 5 and 20% for Cerebrolysin (which matches anecdotal reports), between 2 and 13% for BPC-157, and between 3 and 7% for Semax.

So why are they so unpopular? It may be because they’re really scary. Take Cerebrolysin:

Cerebrolysin (developmental code name FPF-1070) is a mixture of enzymatically treated peptides derived from pig brain, that you’re supposed to inject intramuscularly. If you’re not terrified yet, here are some scary stories.

Perhaps relatedly, these substances are mostly used in Russia and the former USSR and have an unclear legal status in other countries. This may also explain their unpopularity.

But are peptides really that dangerous? The plots above show that the addiction probabilities are tiny and that the tolerance and long-term side effect probabilities are below 5%. Some of these substances, like Cerebrolysin, have small sample sizes, so I quickly checked the literature. A Cochrane review from 2020 found that “Moderate‐quality evidence also indicates a potential increase in non‐fatal serious adverse events with Cerebrolysin use” with a seemingly dose-dependent effect, while the other meta-analyses I found reported that “Safety aspects were comparable to placebo.” So it is a bit unclear, but I would be cautious, as I trust the Cochrane review more. For other peptides, we’re not lucky enough to have a Cochrane review, but the few studies I can find tell me they’re safe: “Semax is well-tolerated with few side-effects.”, “BPC-157 is free of side effects.”, “BPC-157 is a safe therapeutic agent.”, “BPC-157 is a safe anti-ulcer peptidergic agent.” We need more data on the safety of peptides but we can already say: “Probably less dangerous than they look.”

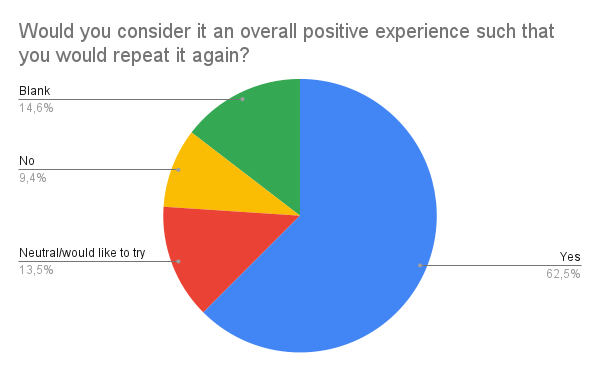

Bonus: Reddit user OilofOrigano has been collecting data on Cerebrolysin; you can check the results here. Results are quite positive (see below), though I think the data was mostly collected on a subreddit dedicated to Cerebrolysin, so I would expect the results to be overly positive.

See results

Zembrin maybe isn’t interesting? #

In his 2020 Nootropic Survey, Scott Alexander found that Zembrin, a concentrated extract of Kanna supposed to improve mood and anxiety, was very highly rated:

Of 37 kanna users, 20 used Zembrin and 17 used something else. The subgroup who used Zembrin reported a mean effectiveness of 6.88, which beats out modafinil to make it highest on the list. After ad hoc Bayesian adjustment, it was 6.72, second only to modafinil as the second most effective nootropic on the list. This really excites me - I’ve felt like Zembrin was special for a while, and this is the only case of a newer nootropic on the survey beating the mainstays. And it’s a really unexpected victory. The top eight substances in the list are all either stimulants, addictive, illegal in the US, or all three. Zembrin is none of those, and it beats them all.

Unfortunately, this doesn’t really match what I found. In my dataset, Kanna (except Zembrin) and Zembrin have exactly the same estimated mean rating of ~4 [3.3-4.7, 95% interval], a bit below prescription SSRIs at 4.3 [4-4.5, 95% interval], which are supposed to act in the same way. (Among the 15 people who tried both, the mean ratings were 3.8 for Kanna and 1.9 for Zembrin, which mostly tells us that the data is very noisy, but also that people may actually prefer non-Zembrin Kanna.)

How can we explain the difference? Maybe the surveyed population is a bit different in my case? But the simplest answer is surely sample noise. SA’s ratings are based on 20 people who tried Zembrin and 17 people who tried non-Zembrin Kanna. My ratings are based on 45 people who tried Zembrin and 49 people who tried non-Zembrin Kanna. Where it gets more complicated is that SA has subsequent data:

Based on these preliminary results, I wrote up a short page about Zembrin on my professional website, Lorien Psychiatry, and I asked anyone who planned to try it to preregister with me so I could ask them how it worked later. 29 people preregistered, of whom I was able to follow up with and get data from 22 after a few months. Of those 22, 16 (73%) said it seemed to help, 3 (14%) said it didn’t help, and another 3 (14%) couldn’t tell because they had to stop taking it due to side effects (two headaches, one case of “psychedelic closed-eye visuals”). Only 13 of the 22 people were willing to give it a score from 1-10 (people hate giving 1-10 scores!), and those averaged 5.9 (6.3 if we don’t count people who stopped it immediately due to side effects). That’s a little lower than on the survey, but this was a different population - for example, many of them in their answers specifically compared it to prescription antidepressants they’d taken, whereas the survey-takers were comparing it to nootropics. Although these findings are not very useful without a placebo control, they confirm that most people who take Zembrin at least subjectively find it helpful.

Do you trust the (a bit) bigger sample size, or the preregistration? Your choice!

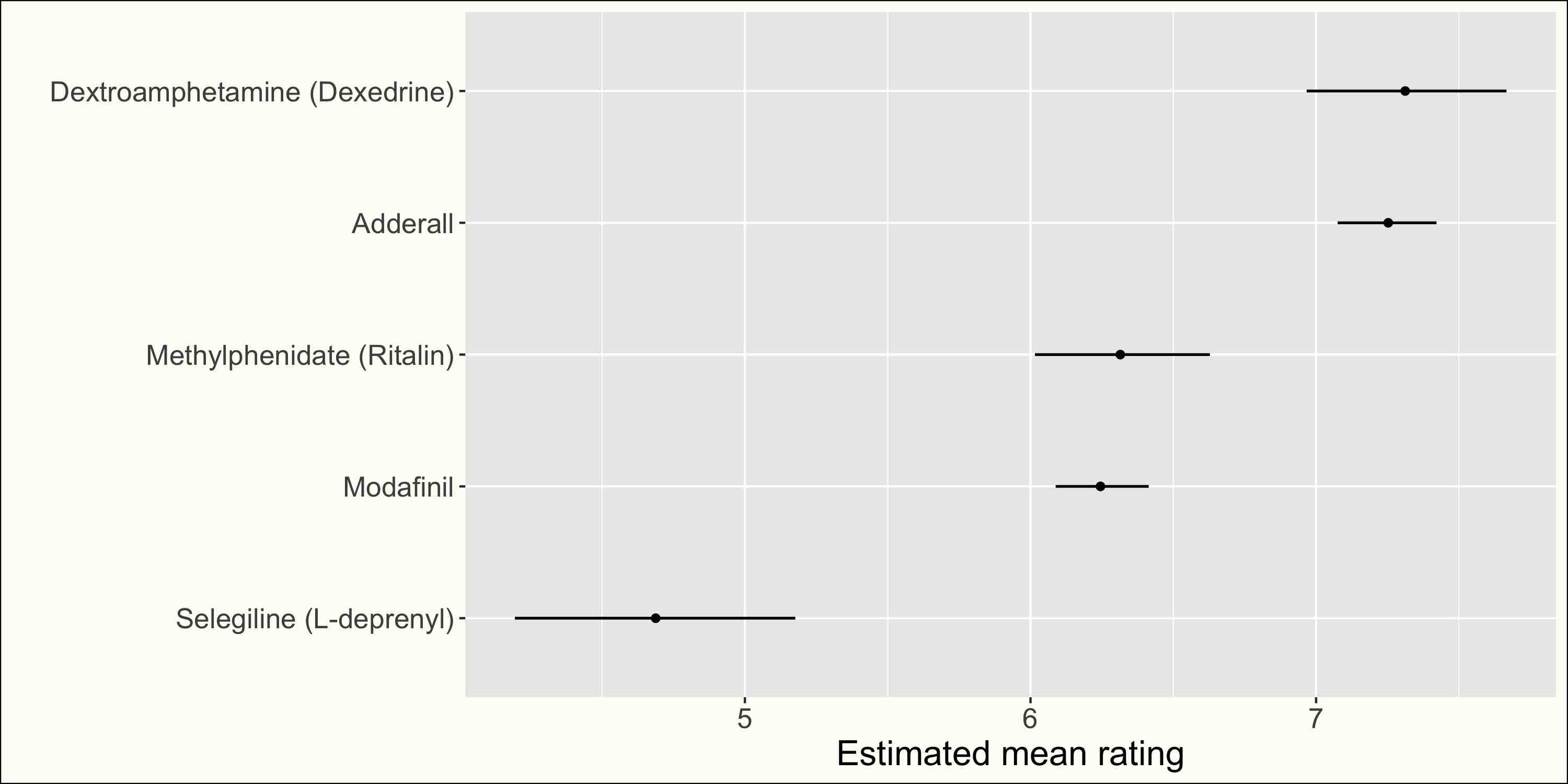

COMPARISONS #

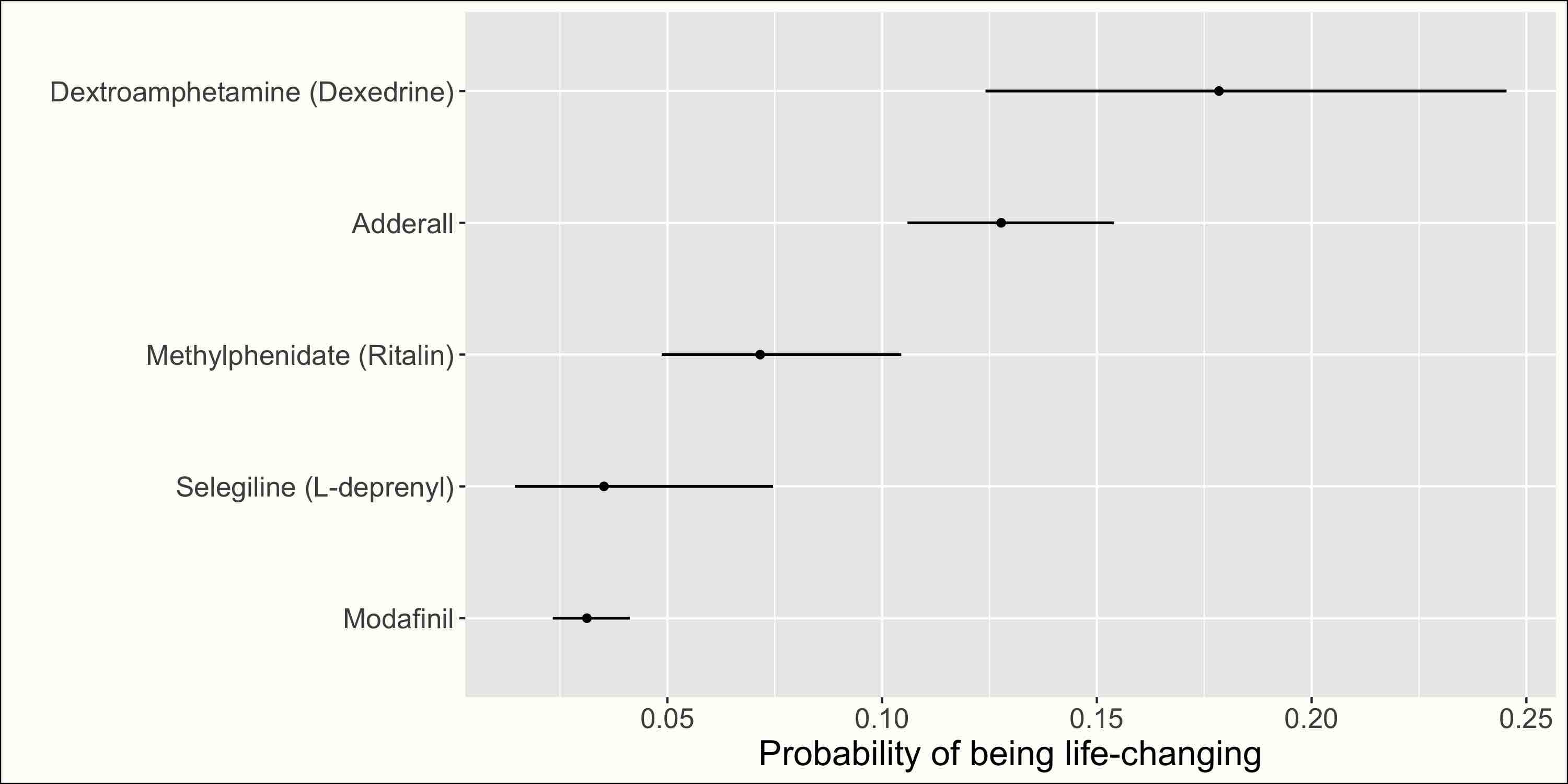

I hear you have ADHD #

See plots?

What is the best stimulant to take if you have trouble focusing? Adderall and Dexedrine have the best ratings. The latter is perhaps more likely to change your life, and both are far above Ritalin and Modafinil. I was surprised about Dexedrine, which I didn’t know about. Still, it does have higher patient ratings than Adderall on Drugs.com and the like, and there seems to be a debate in the literature on which is more effective for ADHD. This is all from this article by Scott Alexander, which is a great explainer on the different amphetamines used to treat ADHD (and a reminder that most of these substances are quite well-studied in the literature, and that you shouldn’t base any decision on my data!).

As far as potential issues are concerned, Adderall, Dexedrine, and Methylphenidate seem equivalent, except for their addiction probability: Methylphenidate has the lowest probability and Dexedrine the highest. I was wondering if the higher addiction probability of “Dexedrine” was due to people using Dextroamphetamine recreationally (in my recommender system I had one item called “Dextroamphetamine (Dexedrine)”), but it seems to match the article linked above.

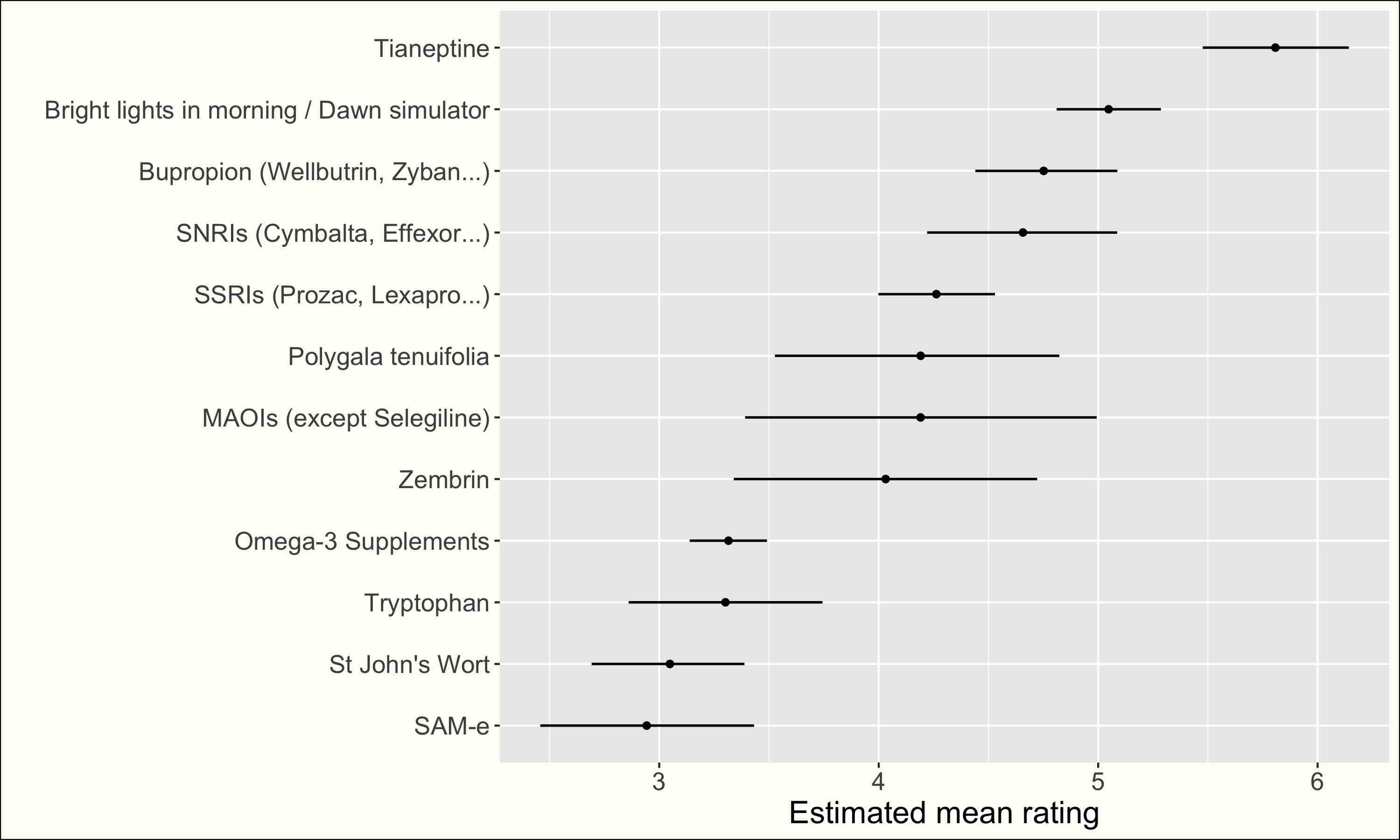

Antidepressants #

In the plot below, I’ve included most of the medications recommended for depression here, and most of the supplements recommended here.

See plots?

Prescription-only medications (including Tianeptine, which has a weird legal status) are rated higher than supplements. (Ratings might be somewhat unfair for more generic supplements: imagine fish oil cured depression as well as SSRIs but did nothing if you’re not depressed. In that case, the majority of non-depressed people would rate it badly, so its mean rating would be low. On the other hand, only depressed people will try SSRIs, which will thus get higher ratings, if only through regression to the mean.) This is not very surprising, but somewhat in contradiction to what was mentioned in the Lorien article:

A more recent study finds 5-HTP [which is what Tryptophan gets turned into] works approximately as well as traditional antidepressants. Everyone agrees these studies are weak and low-quality and we can’t be sure of anything yet, but welcome to the world of depression supplementation.

More surprising, using bright light in the morning was ranked second. Interpreting these ratings is hard, as they are not specifically about depression, but this is still impressive.

Even more surprising, Tianeptine, a French antidepressant, was far above every other medication. (I thought there might be a bias where the people who tried Tianeptine were the people who weren’t happy with classical antidepressants. I checked my data, but people who had tried Tianeptine didn’t seem to have tried many more antidepressants than other antidepressant users.) I’m not sure what’s going on here. Maybe people are expecting less of Tianeptine, which looks more like a nootropic than a medication (and is sort of available over the counter).

Most supplement users report few issues (except for SAM-e and St John’s Wort, which have a high probability of side effects), while people using prescription medications report a lot of side effects. For instance, for Bupropion and SSRIs, I estimate a 30 to 40% probability of side effects making you stop taking them. For SSRIs, there’s a 20 to 30% probability of having long-term side effects. This is quite scary. Tianeptine users report way fewer side effects, but in exchange, they’re more likely to get addicted/tolerant (I wonder how much of this is explained by people getting Tianeptine over the counter).

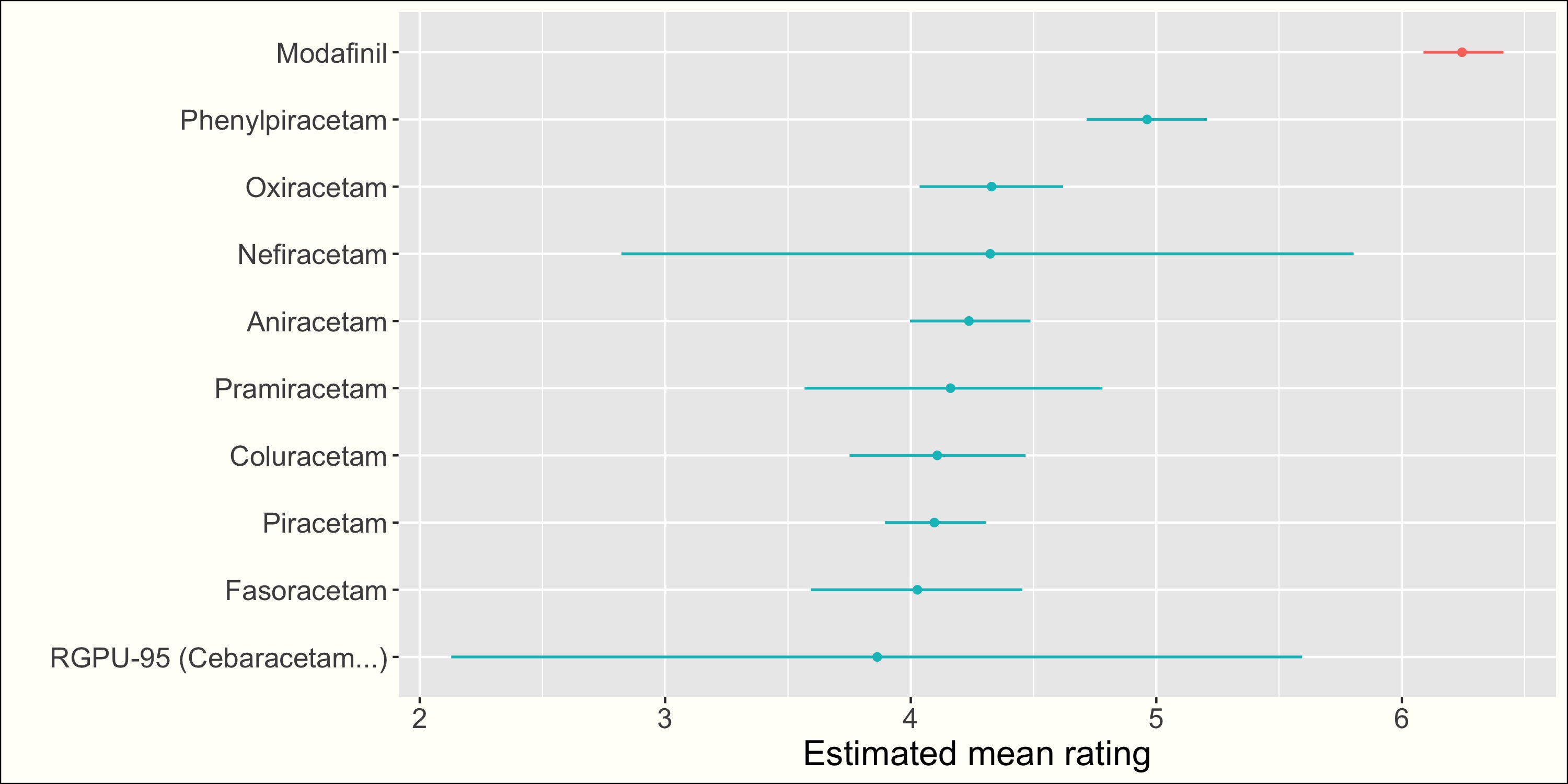

Racetams #

See plots?

All racetams got almost identical, pretty low ratings, except Phenylpiracetam, which was rated much higher (but still way below something like Modafinil).

Racetams seem safe: they all got pretty much the same (low) probabilities for all issues, except for Phenylpiracetam, which has a tolerance probability between 10 and 20%.

BONUS #

How dangerous is Phenibut? #

From the 2016 SSC survey:

Only 3% of users got addicted to phenibut. This came as a big surprise to me given the caution most people show about this substance. Both of the two people who reported major addictions were using it daily at doses > 2g. The four people who reported minor addictions were less consistent, and some people gave confusing answers like that they had never used it more than once a month but still considered themselves “addicted”. People were more likely to report tolerance with more frequent use; of those who used it monthly or less, only 6% developed tolerance; of those who used it several times per month, 13%; of those who used it several times per week, 18%; of those who used it daily, 36%.

The figures I have are somewhat worse, but still better than I expected: I estimate a 5.5%[3-9%] chance of becoming addicted, a 6%[4-10%] chance of having long-term side effects, and a 20%[15-26%] chance of becoming tolerant.

I don’t have the data on dosage or use frequency, so these figures are hard to interpret. Still, the tolerance probability seems high. The addiction probability, while high, is a bit lower than I would have expected reading /r/quittingphenibut. Maybe people don’t get addicted so often to Phenibut because everyone knows that Phenibut is dangerous and people are cautious? Maybe Phenibut addiction isn’t so common, but is nightmarish when it does happen? Maybe most people only tried Phenibut a few times? Also, the 95% interval does include 9%. Please be very careful until we’ve sorted it out!

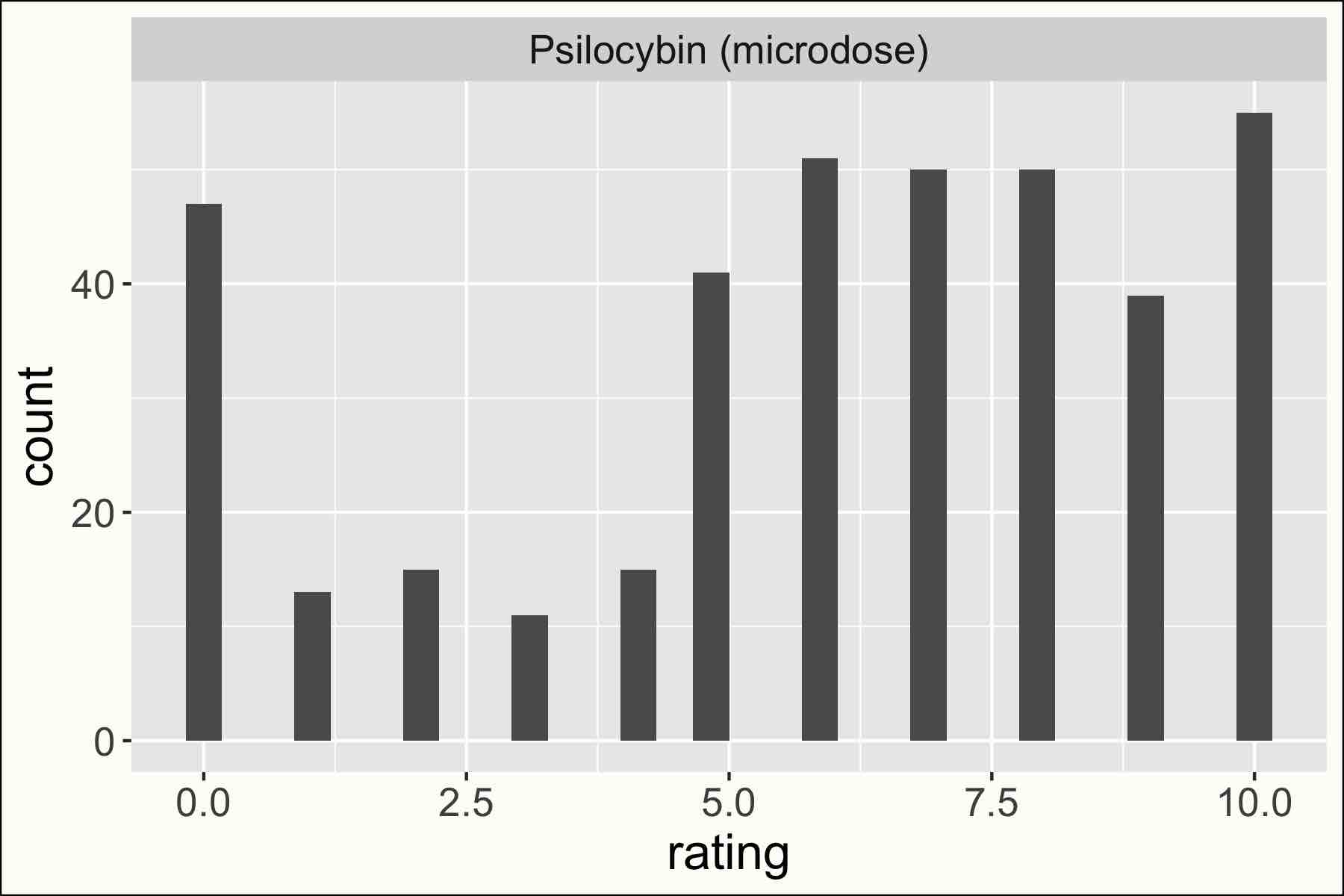

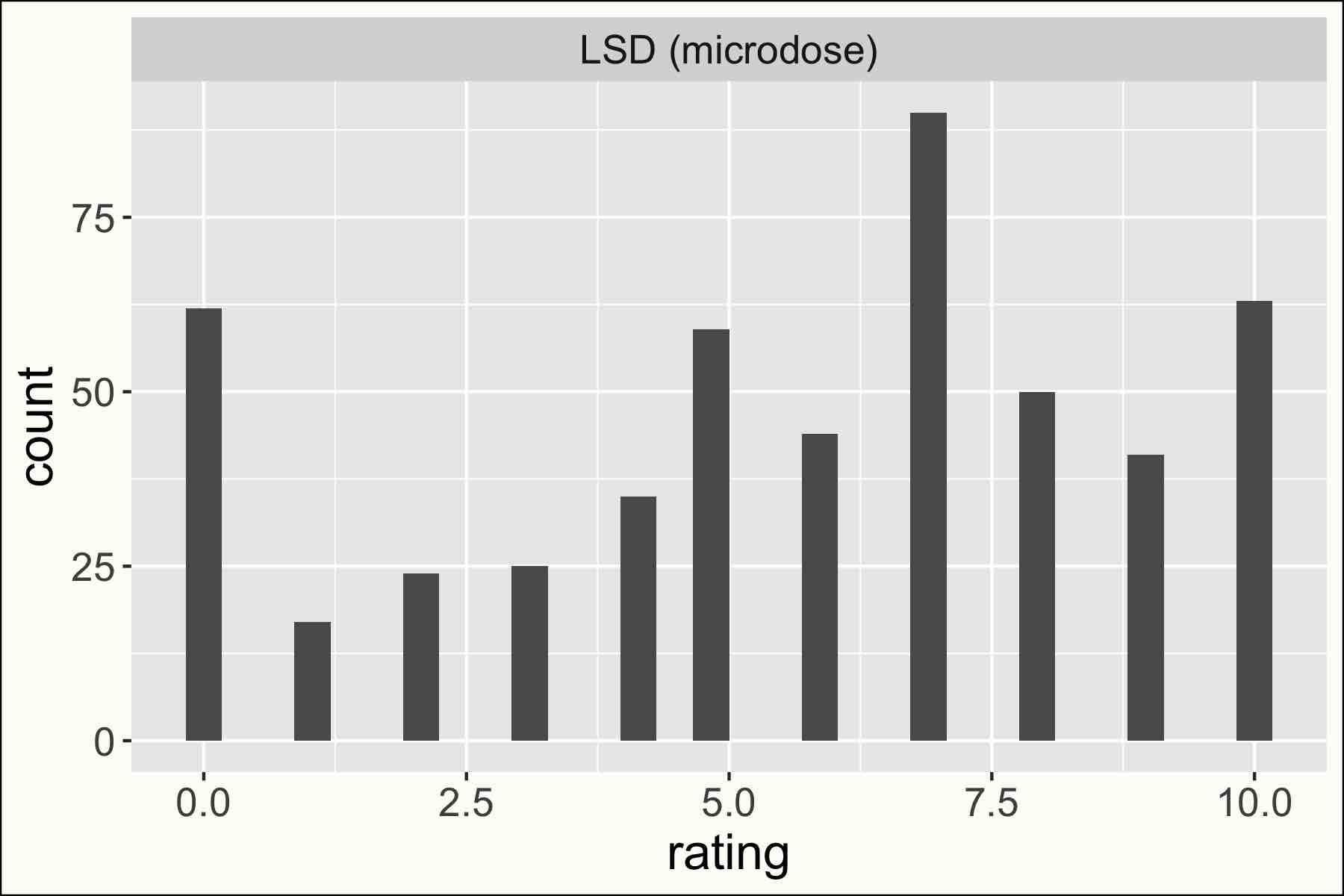

Does microdosing work? #

Microdosing psychedelics was quite highly rated, especially for Psilocybin: I estimate a mean rating of 5.6 [5.3-5.8] for LSD and 6 [5.8-6.3] for Psilocybin, and a probability of changing your life of 6% [4-8%] for LSD and 8% [6-11%] for Psilocybin.

But be careful: this may be an example of the limits of self-reported, unblinded data. Indeed, Gwern has been gathering RCTs on the subject (in addition to his N=1 experiment), and most show little to no effect, except on things like visual intensity or time perception, and sometimes some effect on self-reported mood (how can you be sad when the colors are INTENSE?). (I haven’t checked all of these, but it seems that these studies are underpowered to detect small but relevant effects. Still, they seem to exclude strong microdosing effects.) People seem to be able to trip on a placebo, so I guess this is an area where we should be careful.

In my data, the ratings seem quite spread out, with many negative or neutral ratings (0) and many very high ratings. So there could be a very heterogeneous effect, which would thus be harder for an RCT to spot. There are two sources of heterogeneity here: individual variability and substance/dosing variability, which I expect to be high for illegal low-dose substances (and which was relevant for the biggest RCT on psychedelic microdosing, which relied on participants’ own drug supplies).

See ratings distribution

CONCLUSION #

While I got a lot of boring results (this is reassuring!), I was really surprised by a few things:

- Sport (especially Weightlifting) and sleep were really highly rated. More generally all “lifestyle interventions” were rated way higher than most famous nootropics like Piracetam or Rhodiola Rosea.

- Peptides like Semax or Cerebrolysin were really highly rated, but seem poorly known outside of Russia.

- Tianeptine was rated much higher than any other antidepressant. What is going on here?

- Zembrin may not be better than normal Kanna, contrary to what the ACX 2020 Nootropics survey suggested, which would be disappointing.

I’m sure there are a lot of interesting things I missed in my data. Feel free to explore them.

USEFUL LINKS #

- N=1 experiments and useful information on nootropics by Gwern

- Literature review on common nootropics by Sarah Constantin

- Nootropics survey results (2016, 2020) by SlateStarCodex/AstralCodexTen

- /r/nootropics beginner’s guide

- /r/nootropics research index

- Information on Adderall risks by SlateStarCodex

- Lots of information on supplements and medications here, by Scott Alexander (SlateStarCodex)
